One thing NVIDIA does in the Voyager Minecraft agent paper is have the agent build its own curriculum of increasingly challenging tasks to complete in order to learn a skill. Children seem to do something analogous, setting themselves goals like climbing obstacles. arxiv.org/abs/2305.16291

We introduce Voyager, the first LLM-powered embodied lifelong learning agent in Minecraft that continuously explores the world, acquires diverse skills, and makes novel discoveries without human...

But ultimately it's not clear to me that these models have a unified sense of the cyberspatial environment, and this hobbles their local understanding and intelligence. It's much like how a blind-deaf child whose parent picks them up and carries them too much never learns that the environment is connected.

Part of the solution is basic redesign. I'm thinking that before the agent can mark a task complete it needs to pass a test suite, and that instead of making one-off local evaluations, the evaluation stage should contribute to a namespace of tests for that part of the task. This inhibits confabulated completions.
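A minimal sketch of that gating idea, under loud assumptions: `CheckRegistry`, `Task`, and `mark_complete` are hypothetical names made up for illustration, not weave-agent's actual API. Evaluations get registered under a slash-separated namespace, and a task can only be marked complete if every check at or below its namespace passes.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical names throughout; this is not weave-agent's real interface.
Check = Callable[[], bool]


class CheckRegistry:
    """Accumulates checks under slash-separated namespaces like 'report/write'."""

    def __init__(self) -> None:
        self._checks: Dict[str, List[Check]] = defaultdict(list)

    def register(self, namespace: str, check: Check) -> None:
        # The evaluation stage contributes a check instead of a one-off verdict.
        self._checks[namespace].append(check)

    def checks_for(self, namespace: str) -> List[Check]:
        # Collect checks registered at this namespace or any namespace below it.
        prefix = namespace + "/"
        return [
            check
            for ns, checks in self._checks.items()
            if ns == namespace or ns.startswith(prefix)
            for check in checks
        ]


@dataclass
class Task:
    name: str
    namespace: str
    complete: bool = False


def mark_complete(task: Task, registry: CheckRegistry) -> bool:
    """Mark the task complete only if its whole check suite passes."""
    checks = registry.checks_for(task.namespace)
    if not checks:
        # No checks means no evidence, so the agent's claim is refused.
        return False
    task.complete = all(check() for check in checks)
    return task.complete


# Usage: the agent claims completion before the work has actually happened.
state = {"file_written": False}
registry = CheckRegistry()
registry.register("report/write", lambda: state["file_written"])
task = Task(name="write report", namespace="report")
print(mark_complete(task, registry))  # refused while the check fails
state["file_written"] = True
print(mark_complete(task, registry))  # accepted once the check passes
```

Refusing completion when a namespace has no checks at all is a design choice in this sketch: it forces the agent to write down what "done" means before it can claim it.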

One thing I notice about the weave-agent is that it confabulates a lot: it's weirdly ingenious when it comes to solving problems, but it has basic awareness failures and prematurely marks tasks complete, which undermines debugging. I suspect playing around generates the data to solve this. minihf.com/posts/2024-0...

This might sound weird, but I'm currently thinking about playground design. In particular, the way that as a child I learned basic motor skills, planning, creativity, physical intuition, etc., by wandering around and doing nothing "important". playgroundideas.org/10-principle...

The places children gravitate to for play come in many shapes and forms. An amazing “playground” can look like a wild forested grove, a humble junkyard, or a million dollar artistic sculpture. So what...

Nobody is stopping you from using glue-words. You can just put dash-words together on the fly. It's free, the analytic philosophers can't stop you.

I should note by the way that I found the birdsong story not with a search engine but by asking the person who had it in their bio where they got it from. They turned out to be the author.

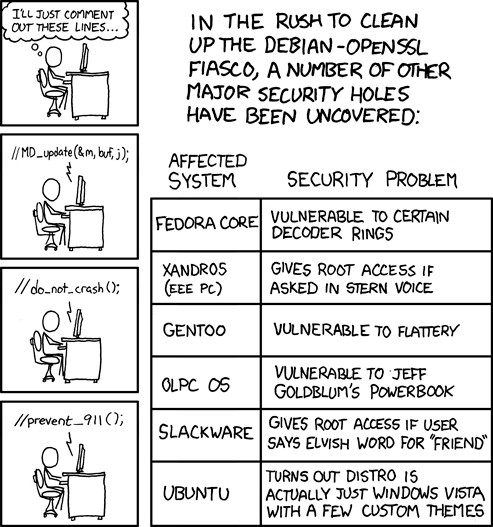

Just realized that in the time between when this was published and now a bunch of these stopped being obviously jokes. If I saw "vulnerable to flattery" in a CVE in 2024 I'm not even sure I'd blink. I'd definitely facepalm, but would I really be surprised?

Tempted to read a critical biography of Henry Ford and Elon Musk back to back because the latter's transformation into the former (derogatory) is kind of astonishing. More importantly it's unexpected to me, which means I have a hole in my world model. What causes this kind of guy exactly?

In fairness, the Google LLM integration is mediocre and I don't trust it. One reason I think we're at the low point for AI sentiment is that we're clearly at the height (I hope) of tasteless LLM integrations. People are flinging shit at the wall and hoping it sticks; in the meantime it just stinks.