Vercel Functions are now faster—and powered by Rust. ◆ 30% faster cold starts for smaller workloads ◆ 80ms faster (average) and 500ms faster (p99) for larger workloads Learn more about our rewrite of the core to Rust. vercel.com/blog/vercel-...

Learn how we've improved startup performance with our Rust-powered functions.

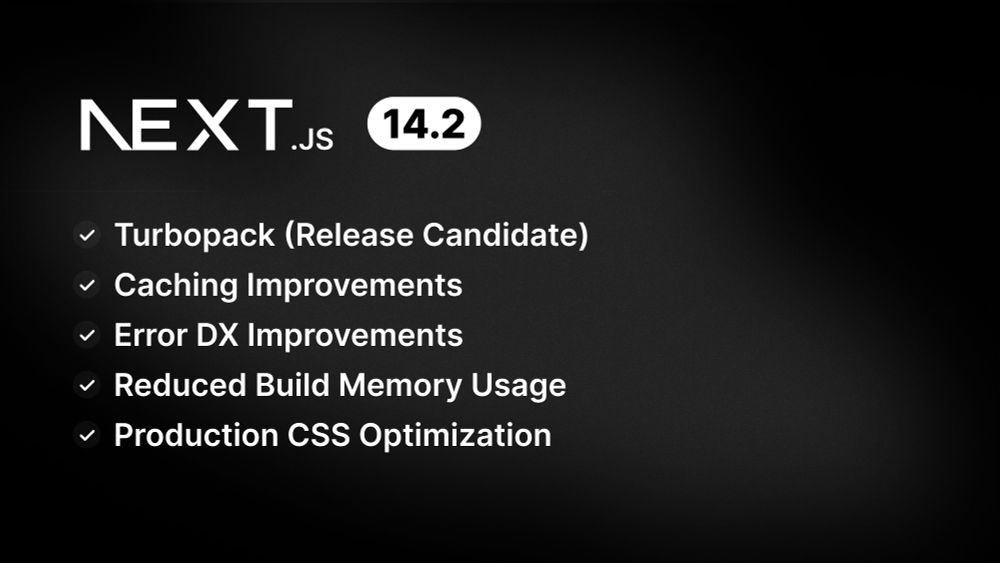

Next.js 14.2 ◆ Turbopack (RC): 99.8% of tests passing for `next dev --turbo` ◆ Build / Production Improvements: Reduced memory usage ◆ Caching Improvements: Configurable client-side cache revalidation ◆ Errors DX: Better hydration mismatch messages nextjs.org/blog/next-14-2

Next.js 14.2 includes development, production, and caching improvements, including new configuration options, 99% Turbopack tests passing, and more.
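As a rough illustration of the configurable client-side cache revalidation mentioned above, here is a minimal sketch. It assumes the feature refers to the experimental `staleTimes` option. The values 30 and 180 (seconds) are illustrative, not recommendations. The contents are plain ESM, so in 14.2 they would likely live in `next.config.mjs`.

```typescript
// Sketch of a Next.js config (assumed file: next.config.mjs).
// Assumption: "configurable client-side cache revalidation" maps to
// the experimental `staleTimes` option; the values are illustrative.
const nextConfig = {
  experimental: {
    staleTimes: {
      // Seconds a client-cached dynamic page segment is reused.
      dynamic: 30,
      // Seconds a client-cached static page segment is reused.
      static: 180,
    },
  },
};

export default nextConfig;
```

Because the option is experimental, its name and behavior may change between releases, so check the release notes linked in the post before relying on it.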

We're improving the pricing of our infrastructure products: ◆ Pay exactly for what you use, in granular increments ◆ Lower prices for essentials (bandwidth & functions) ◆ New primitives for easier spend optimization ◆ Our hobby tier remains free vercel.com/blog/improved-infrastructure-pricing

We're reducing pricing on Vercel fundamentals like bandwidth and functions.

Next.js AI Chatbot 2.0 ◆ AI SDK 3.0 with React Server Components ◆ Generative UI support ◆ Updated Shadcn UI and Next.js ◆ Simplified deployment vercel.com/changelog/ne...

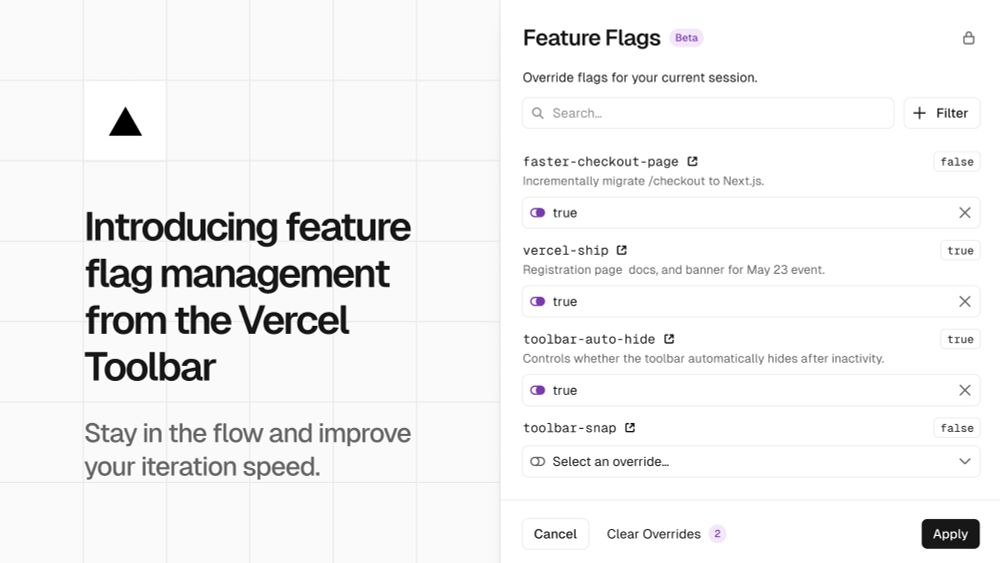

View and override feature flags from the Vercel Toolbar. ◆ Optimizely ◆ LaunchDarkly ◆ Statsig ◆ Split ◆ Hypertune ◆ …or bring your own flags Compatible with any flag provider and framework. vercel.com/blog/toolbar...

Uplevel your flags workflow with the Vercel Toolbar

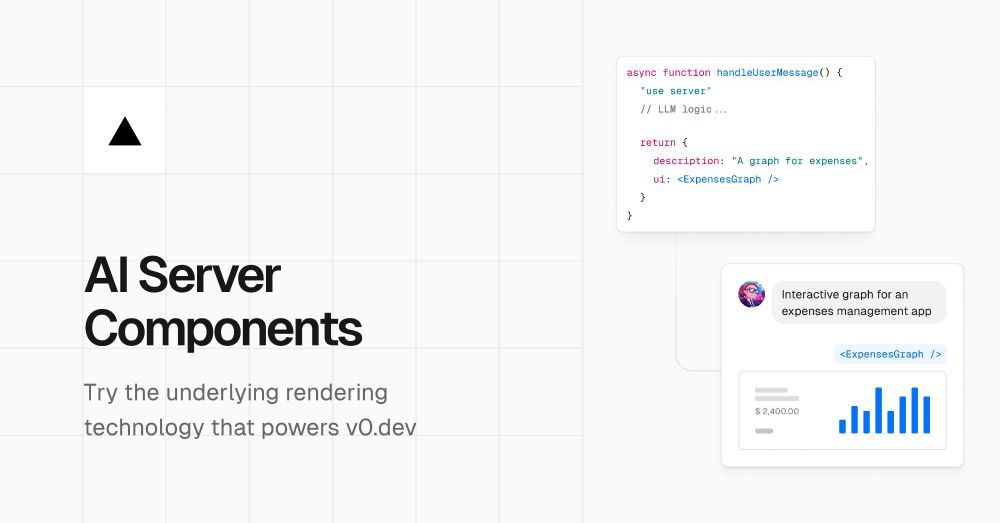

AI SDK 3.0 ◆ Generative UI (alpha) ◆ Assistant Tools APIs ◆ Mistral, Azure, Perplexity, and Gemini support vercel.link/ai-sdk-3

AI Server Components: A new rendering model for AI-native web applications

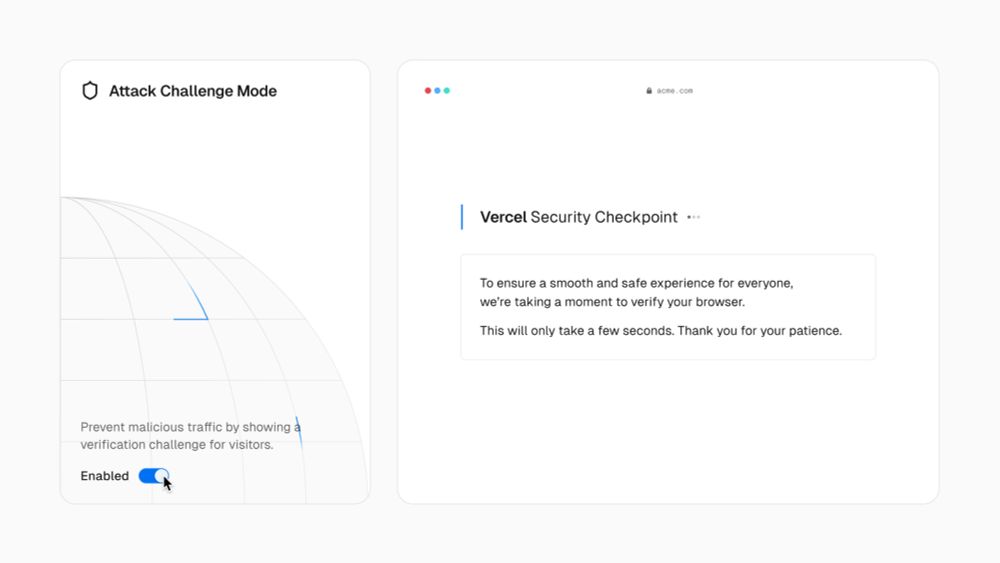

Introducing Attack Challenge Mode. An extra layer of defense for DDoS and malicious traffic, free for all plans. When enabled, the Vercel Firewall will serve a challenge page to help mitigate attacks. vercel.com/changelog/pr...

Announcing Vercel Ship 2024. Join us May 23 in NYC or online. vercel.com/ship

Join us on May 23rd to get a first look at new tools and powerful features centered on scale, reliability, and speed.

Vercel Functions: Faster, modern, and more scalable ◆ Up to 100,000 concurrent invocations ◆ Web `Request` and `Response` APIs ◆ Zero-configuration streaming ◆ Run functions up to 5 minutes ◆ Faster cold starts ◆ Automatic regional failover vercel.com/blog/evolvin...

Our first major iteration of Vercel Functions with increased concurrency, longer durations, faster cold starts, streaming, and more.
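To make the Web `Request` and `Response` APIs and streaming points concrete, here is a minimal sketch of a function handler. The file path `api/hello.ts`, the `name` query parameter, and the `greetingFor` helper are hypothetical and chosen only for illustration; the post does not describe them.

```typescript
// Hypothetical file: api/hello.ts (path and names are illustrative).

// Hypothetical helper: build a greeting from the `name` query parameter.
export function greetingFor(url: string): string {
  const name = new URL(url).searchParams.get("name") ?? "world";
  return `Hello, ${name}!`;
}

// Handler written against the Web-standard Request and Response types.
// The body is a ReadableStream, so the response is sent chunk by chunk.
export function GET(request: Request): Response {
  const encoder = new TextEncoder();
  const parts = [greetingFor(request.url), "\n", "Streamed in chunks.\n"];

  const body = new ReadableStream<Uint8Array>({
    start(controller) {
      // Enqueue each part as its own chunk, then end the stream.
      for (const part of parts) {
        controller.enqueue(encoder.encode(part));
      }
      controller.close();
    },
  });

  return new Response(body, {
    headers: { "content-type": "text/plain; charset=utf-8" },
  });
}
```

Since the handler uses only standard Web APIs, it can be exercised locally in any runtime that provides `Request`, `Response`, and `ReadableStream` globals, for example by calling `GET(new Request("https://example.com/api/hello?name=Ada"))`.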

Introducing AI Integrations on Vercel ◆ Perplexity ◆ Replicate ◆ Pinecone ◆ Modal ◆ Fal ◆ LMNT ◆ Together ◆ ElevenLabs ◆ Anyscale vercel.com/blog/ai-inte...

AI Integrations on Vercel