»[O]ur results do not mean that AI is not a threat at all«, emphasized Iryna Gurevych. »[But future research should] focus on other risks posed by the models, such as their potential to be used to generate fake news.« (3/🧵) Full press release: nachrichten.idw-online.de/2024/08/12/i... #ACL2024NLP

The 2024 study, authored by Sheng Lu, Irina Bigoulaeva, Rachneet Sachdeva, Harish Tayyar Madabushi & Iryna Gurevych (BathNLP Lab | UKP Lab), was just presented at #ACL2024NLP arxiv.org/abs/2309.01809

Large language models, comprising billions of parameters and pre-trained on extensive web-scale corpora, have been claimed to acquire certain capabilities without having been specifically trained...

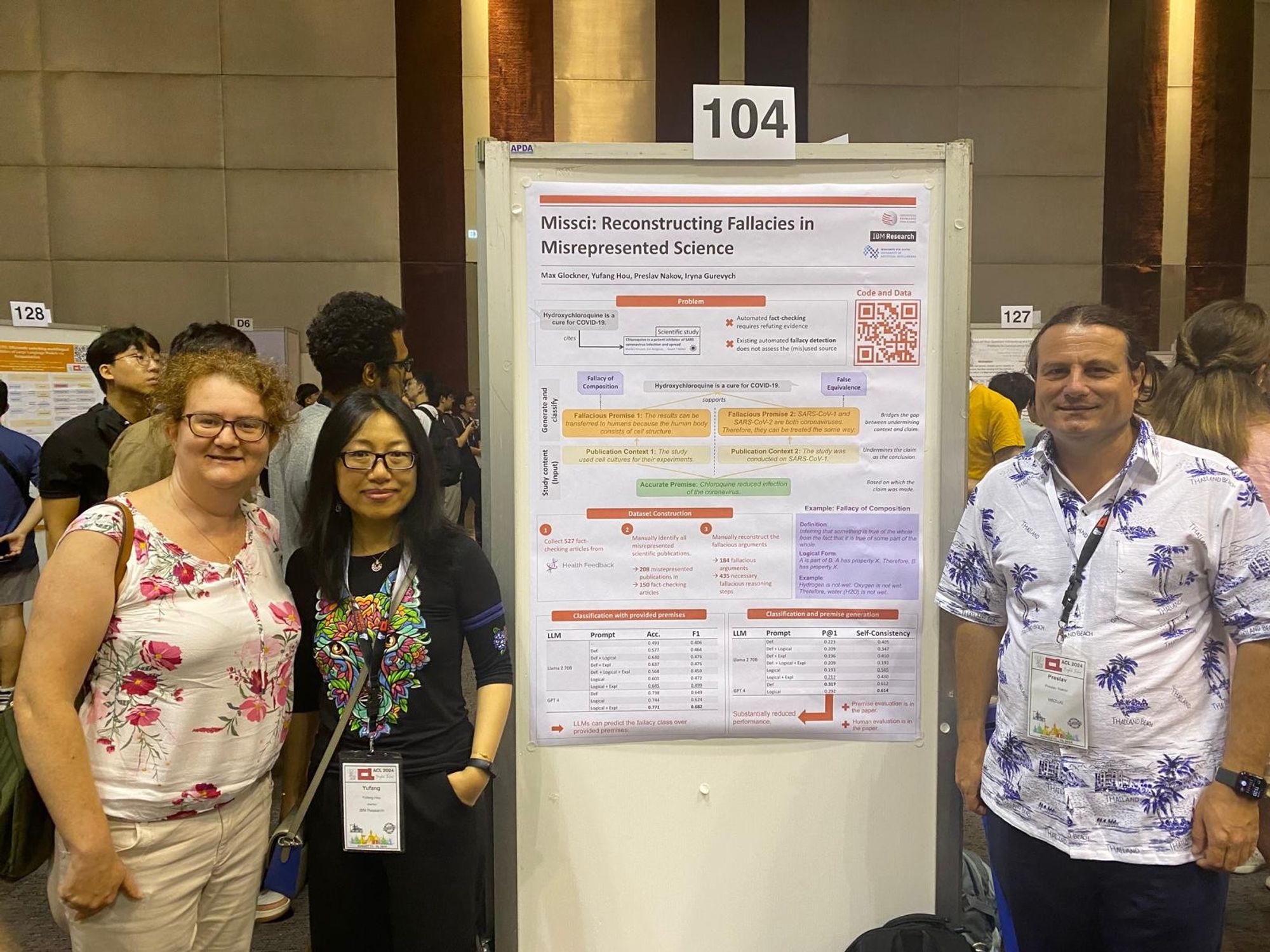

Our colleagues Iryna Gurevych, Yufang Hou and Preslav Nakov presenting the work of Max Glockner on #Missci #ACL2024NLP arxiv.org/abs/2406.03181

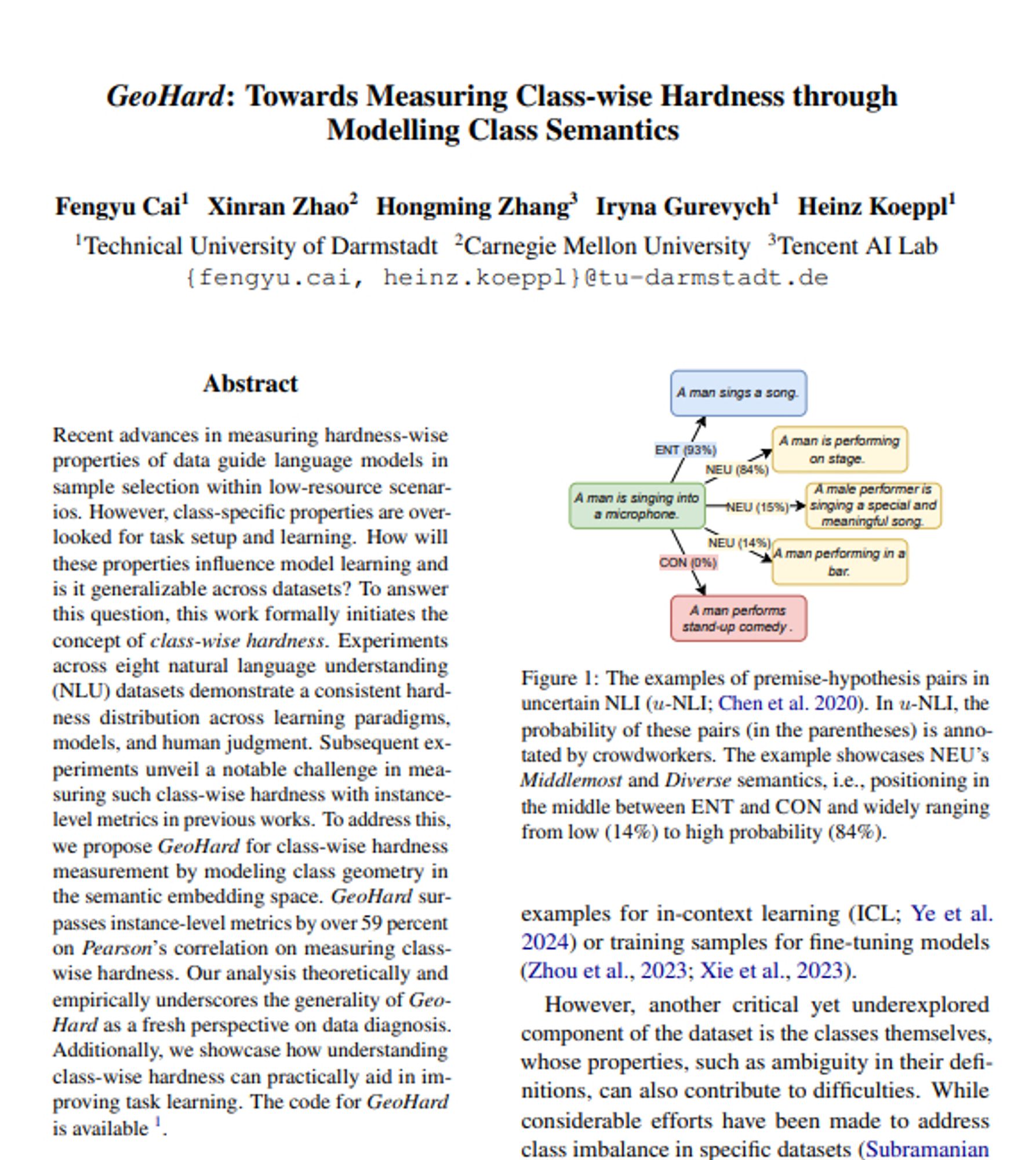

And consider following the authors Fengyu Cai (UKP Lab), Xinran Zhao (Carnegie Mellon University), Hongming Zhang (Tencent AI), Iryna Gurevych, and Heinz Koeppl (@cs-tudarmstadt.bsky.social @tuda.bsky.social) #ACL2024NLP 🇹🇭!

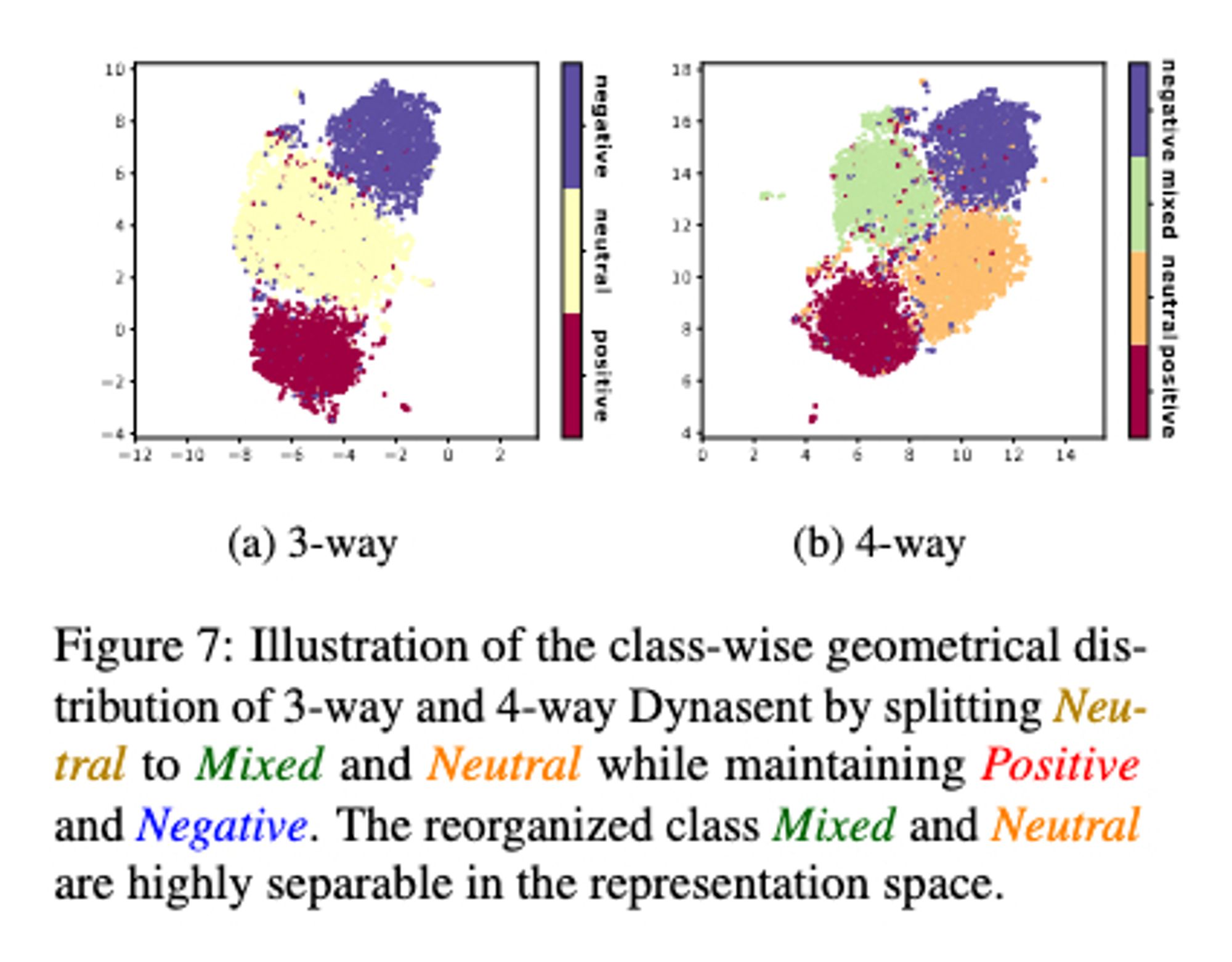

We demonstrate that, given knowledge of class-wise hardness, class reorganization leads to a more coherent class-wise hardness distribution and further improves model performance. (7/🧵) #ACL2024NLP #NLProc

☝️ Moreover, we theoretically prove that intra-class hardness is associated with overfitting, which leads to performance degradation during training. (6/🧵) #ACL2024NLP #NLProc