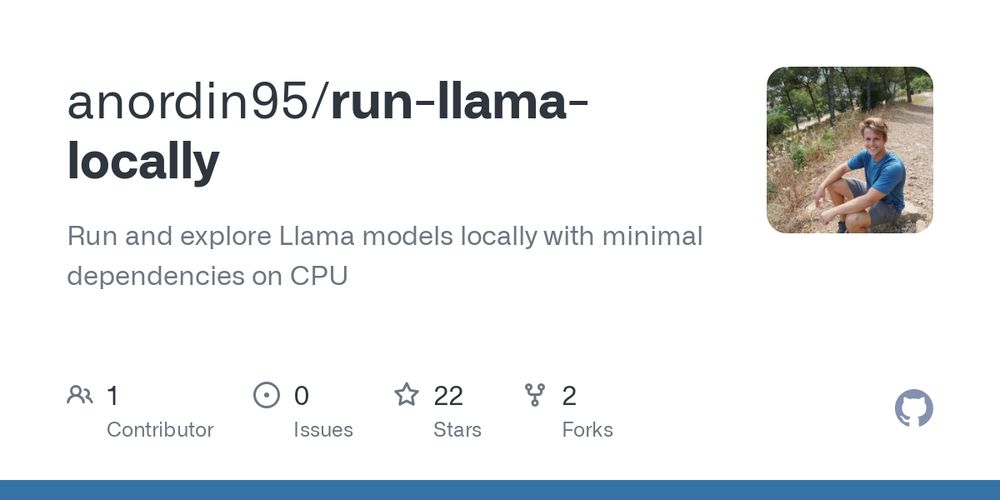

Run Llama locally with only PyTorch on CPU (github.com)


Wanchao Liang, Tianyu Liu, Less Wright, Will Constable, Andrew Gu, Chien-Chin Huang, Iris Zhang, Wei Feng, Howard Huang, Junjie Wang, Sanket Purandare, Gokul Nadathur, Str... TorchTitan: One-stop PyTorch native solution for production ready LLM pre-training https://arxiv.org/abs/2410.06511

Second, the properties of our new energy function and the connection to the self-attention mechanism of transformer networks are shown. Finally, we introduce and explain a new PyTorch layer (Hopfield layer), which is built on the insights of our work. ml-jku.github.io/hopfield-lay...

apple needs to hire a couple of developers to get the extended pytorch universe to make use of their fancy chips.

PyTorch was really heavily influenced by Chainer, wasn't it.

I'm pretty sure I know where it is: when the queuing server gets a req from the firehose consumer, it creates a worker which instantiates PyTorch and loads the ML model, and the way it spawns means that it loads the model from disk every time. I have no idea how to fix that, I am not good at Python lol.
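One common way to avoid reloading on every request is to cache the loaded model inside a long-lived worker, so that only the first request pays the disk cost. The sketch below is a minimal illustration under assumptions, not the poster's actual code: `get_model`, `handle_request`, and the `loader` argument are hypothetical names, and in a real PyTorch service `loader` would be whatever function currently reads the model from disk.

```python
import threading

# Process-wide cache: model path -> loaded model object.
_model_cache = {}
_cache_lock = threading.Lock()


def get_model(path, loader):
    """Return the model for `path`, calling `loader(path)` only on first use.

    `loader` is a hypothetical stand-in for the existing load-from-disk code
    (in a PyTorch service, the function that builds and loads the model).
    """
    with _cache_lock:
        if path not in _model_cache:
            _model_cache[path] = loader(path)  # slow path, runs once per process
        return _model_cache[path]


def handle_request(req, path, loader):
    """Hypothetical worker entry point: reuse the cached model for each request."""
    model = get_model(path, loader)
    return model(req)
```

This only helps if the worker process stays alive between requests. If the queuing server spawns a brand-new process per request, the cache starts empty each time, so the spawning would likely need to change to a persistent worker (or a small pool of them) that pulls requests from the queue.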