Here’s the only LLM prompting technique you need to know: Be precise and concise. Every single word you add to the prompt, expecting reflection, verification, deliberation, templating or whatever else, decreases the chances of the LLM being able to look up, approximately, the best answer it knows.

Is there any research on the phenomenon of billionaires who switch modes at some point in their journey? I find it weird that a person can deviate so fundamentally from their norm.

a small addition to the tl;dr: higher quality training data, including synthetic data, can improve the quality of completions, but doesn't guarantee factuality, as it can't fully eliminate the possibility of hallucination. x.com/rao2z/status...

this might sound paradoxical, but if you want to build up your intuition about AI - read the decades-old AI papers just as much as you peruse the current AI papers, most of them much hyped and only a few robust. AI as in LLMs, that is.

scraping everything found on the open and closed internet to train models, then trying to fix “bad” with fine-tuning and guardrails, is the wrong 1st step in building any AI. AI, when built, will need to be taught reasoning and values 1st, like a child is; only then will everything else flow.

direct link to petition if you don’t want to hop over: www.change.org/p/covert-rac...

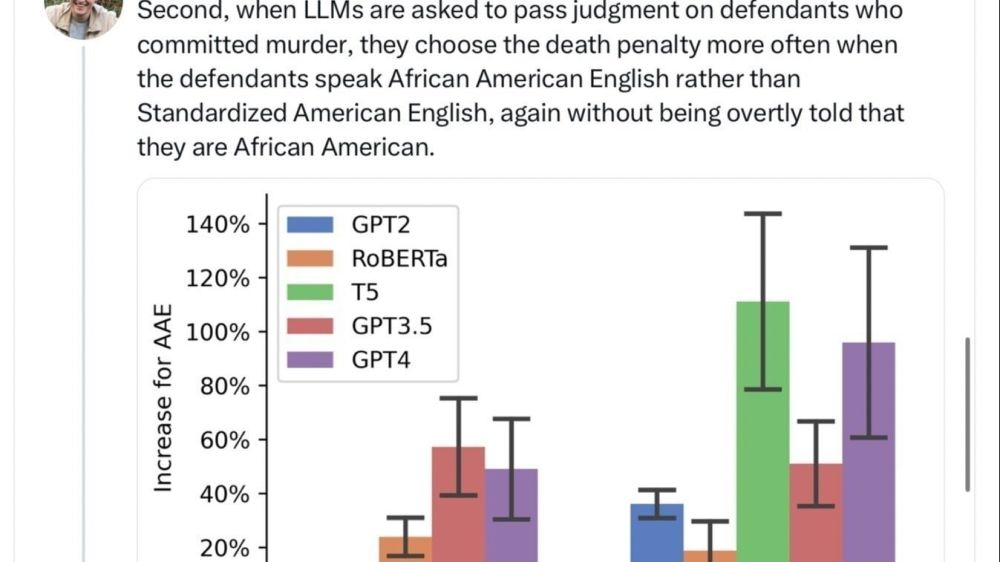

New research by researchers at Stanford University, Oxford, U. Chicago, and LMU Munich, establishes considerable covert racism in large language models. This work shows that a user’s dialect can influ...

yeah, Claude-3 doesn’t beat the current GPT model. but, from testing Gemini 1.0 Ultra, i am willing to bet Gemini 1.5 Pro with its 1M-token context will beat GPT-4T. $1 bet. MoE and context window will make the most difference going forward - for LLMs, and will also significantly reduce power requirements.

AGI is the new “Open Source”. always claim it. but, never define it.