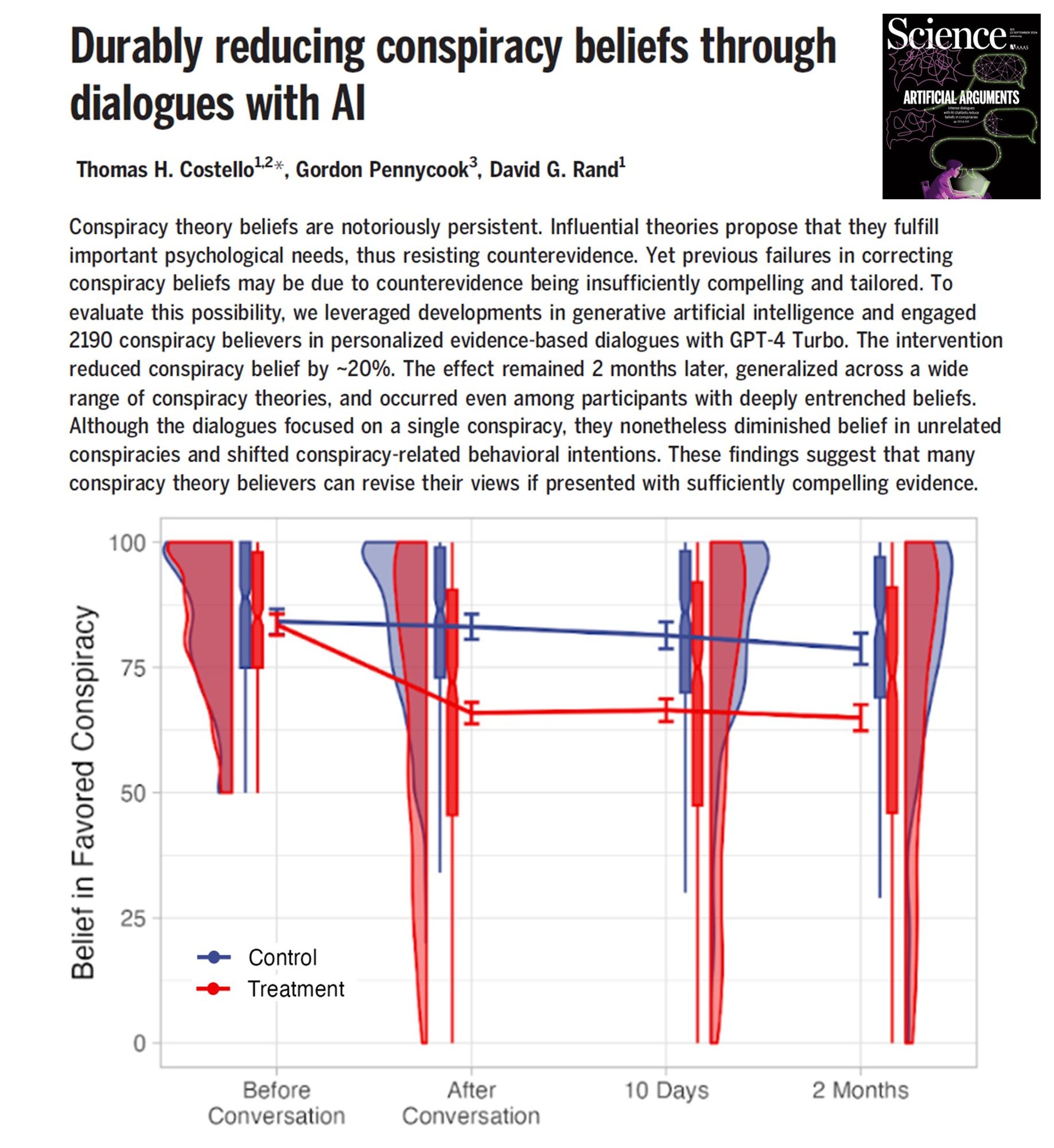

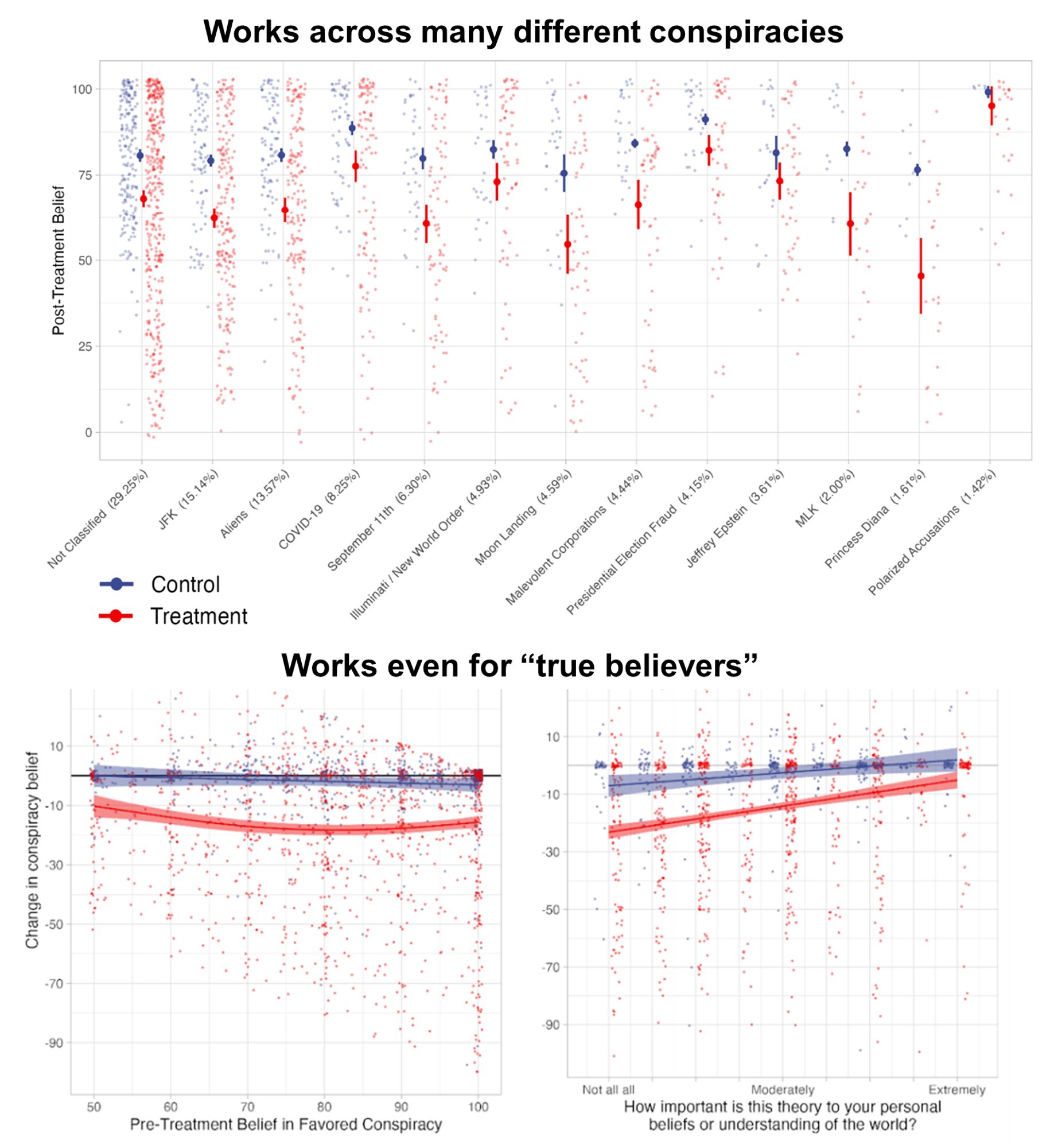

🚨Out in Science!🚨 Conspiracy beliefs famously resist correction, ya? WRONG: We show brief convos w GPT4 reduce conspiracy beliefs by ~20%!

- Lasts over 2mo
- Works on entrenched beliefs
- Tailored AI response rebuts specific evidence offered by believers

www.science.org/doi/10.1126/...

AI for conspiracy theory to be developed by the extreme right in 3,2,1...

If I read this right, you did not check to see whether participants had switched to CT's *outside* your list of "15 widespread CT's".

Apologies if I missed this in the paper, but could the effects be due to a higher level of trust in AI than people? For example, suppose you did a "reverse Wizard of Oz" study, where you told participants they were chatting with a human, but in fact it was AI. Do you think you'd see the same result?

Concerns noted p.12 -- or “Who will debunk the debunkbots?” Argues for use of personal agents that loyally serve the user (Richard Whitt), much like social media middleware agents and related social processes as I propose. ucm.teleshuttle.com/2023/11/a-ne... papers.ssrn.com/sol3/papers.....

What I would do for a qualitative component! Just absolutely fascinating.

Would you expect a 20% reduction for other types of (non-conspiracy) beliefs?

I mean, it means most still keep holding onto what they already believed, but still interesting, because it's more personal (in response) but also less personal (not directly to a person)...

Super neat! Will be this weekend's reading. Thank you!

This is so neat! I worry a bit about the opposite being true as well, in terms of convincing people of conspiracies, but that snippet in the chat is so interesting.

Very brief read through, but this is fascinating work.