First episode of our new podcast is out! Episode 1: "What is Intelligence?" It's hard to fully answer this question, but we had some great discussions about it with superstars Alison Gopnik and John Krakauer. Give it a listen! complexity.simplecast.com/episodes/nat...

Never thought I would be a podcast host, but... I'm co-hosting this season's Complexity Podcast from @sfiscience.bsky.social complexity.simplecast.com/episodes/tra...

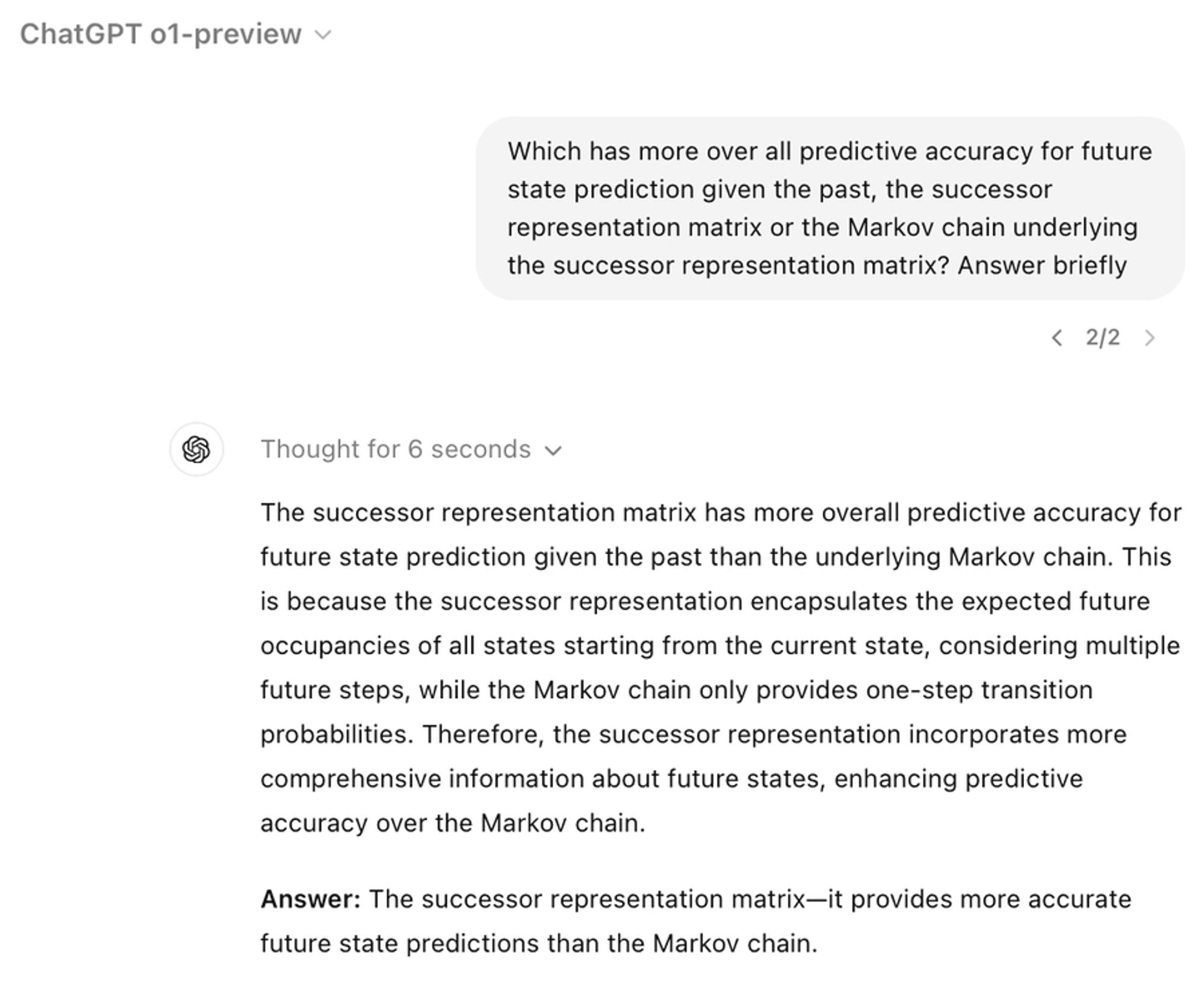

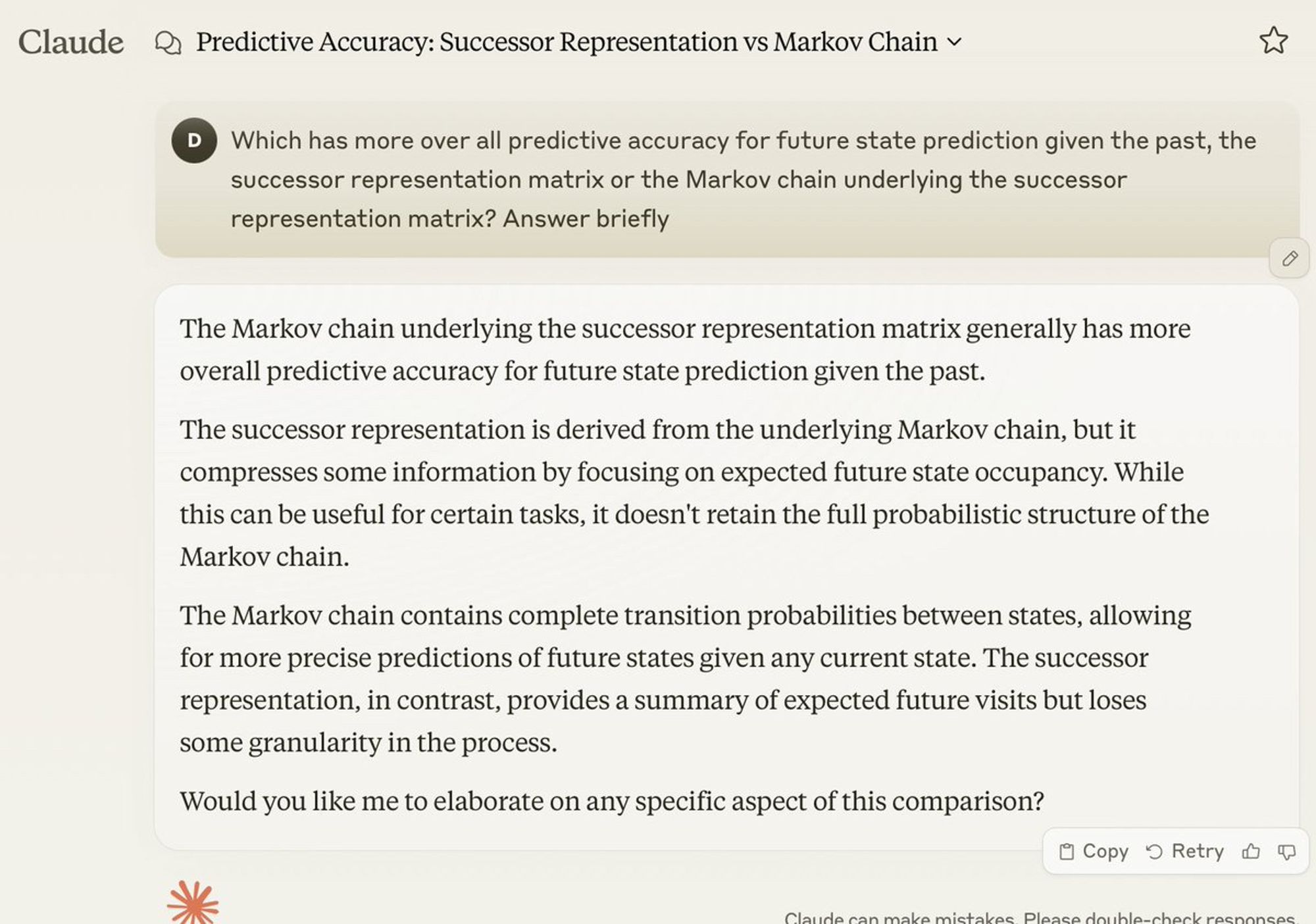

It's not just you who's confused about successor representations (SR); advanced chatbots are too, and I definitely don't blame them. They just need to read my blog: blog.dileeplearning.com/p/a-critique...

Next topic …where is transformer attention in the brain? 😛😇

Today's Q for discussion by the #NeuroAI crowd: In-context learning (i.e. learning from a few examples sans synaptic updates) is a major advantage of large AI models: arxiv.org/abs/2301.00234 Do we have clear evidence of rapid learning with no synaptic changes in the brain? If so, when/where?

With the increasing capabilities of large language models (LLMs), in-context learning (ICL) has emerged as a new paradigm for natural language processing (NLP), where LLMs make predictions based...

Ok, so ICL in transformers also includes synaptic changes in “working memory”… the darned prompt has to be stored somewhere. Just think of the context buffer… someone has to write the prompt into it.

Had a really fun chat w/ Mike Levin, Nick Cheney, & Ben Hartl on evolution, development, and the idea of the genome instantiating a generative model of the organism www.youtube.com/watch?v=6QaM...

YouTube video by Michael Levin's Academic Content

Good...you'll get the neuroscience equivalent of this #AGIComics

Here's the meta-point: If you are going to poll a wide (and a bit chaotic, and pre-paradigm) field like neuroscience to check "Is progress being made"...you are going to be disappointed. A consensus, "here's how", is not going to come out. I just don't know what point you want to make out of it.

How much neuroscience do you actually read @garymarcus.bsky.social ? Do you think you’ll be able to recognize connective tissue work before it catches on?

If you have looked into models of hippocampus, you have encountered successor representations -- but probably in a way that ascribes properties to it that it doesn't have. Maybe this blog will offer some clarity. blog.dileeplearning.com/p/a-critique...

The successor representation (SR) is a popular, influential, and often-cited model of place cells in the hippocampus.