GPT: Decoder is all you need. (Also, pre-training + finetuning 💪) https://openai.com/research/language-unsupervised

Our paper club recently revisited some of the earlier language modeling papers. Here's a one-liner for each.

Attention: Query, Key, and Value are all you need* (*also position embeddings, multiple heads, feed-forward layers, skip connections, etc.) https://arxiv.org/abs/1706.03762
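For anyone who wants the "Query, Key, and Value" one-liner in code form, here's a minimal sketch of single-head scaled dot-product attention in plain Python. The function names (`softmax`, `attention`) and the toy inputs are my own assumptions for illustration, not code from the paper, and it leaves out everything in the footnote (position embeddings, multiple heads, feed-forward layers, skip connections).

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Single-head scaled dot-product attention on lists of vectors.

    Illustrative only: each query is scored against every key, the
    scores are scaled by sqrt(d_k) and softmaxed, and the output is
    the weighted sum of the value vectors.
    """
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Dot product of the query with each key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # Weighted sum of the value vectors, one output dimension at a time.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
    return outputs


# Toy example: identical keys get equal weight, so the output
# is simply the average of the two value vectors.
result = attention(
    queries=[[1.0, 0.0]],
    keys=[[1.0, 1.0], [1.0, 1.0]],
    values=[[0.0, 2.0], [4.0, 6.0]],
)
```

When the keys are identical, as in the toy example, the softmax weights are uniform, which makes the result easy to check by hand.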

Latte turned 2 today and got a free peanut butter paw from the bake shop. She couldn’t resist and started drooling while posing for a photo 🤤

It was their second birthday party, so naturally there were pup cups. All the pups were very well behaved while waiting for noms. 😍

Latte (left) and her brother, Katsu (right). It's amazing how similar they are in personality yet so different in appearance.

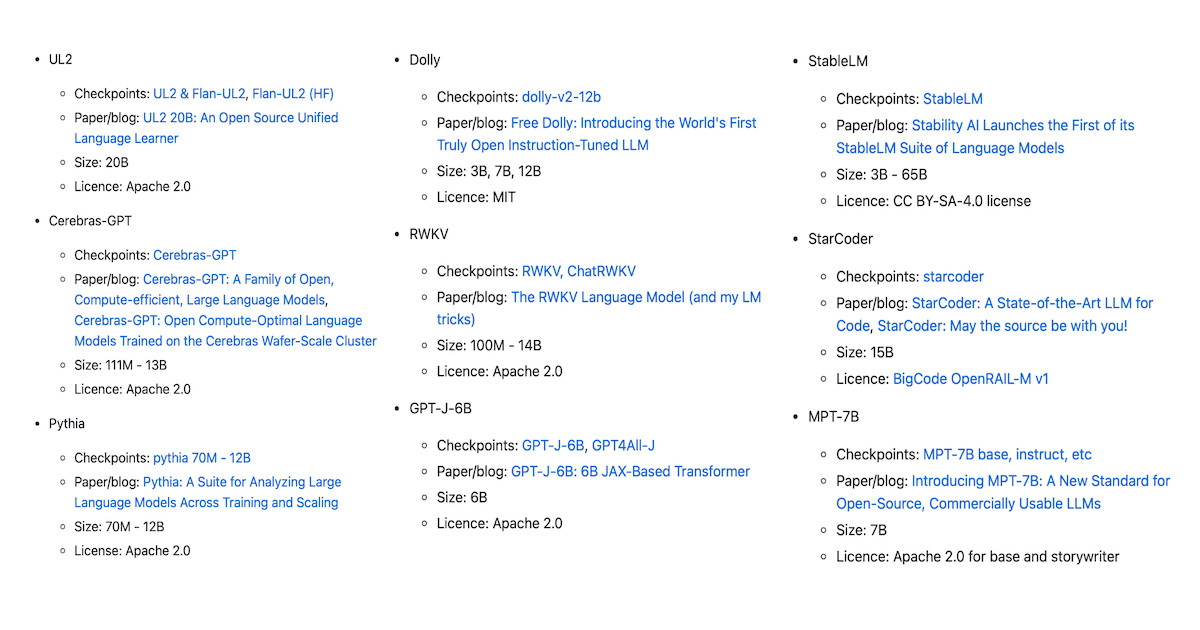

Started a list of open-source LLMs with commercial licenses so you can fine-tune your own applications. Contributions welcome! https://github.com/eugeneyan/open-llms

Welcome to Bluesky @karlhigley.bsky.social! 👋

Specifically around open large language models? Will 👀