Really interesting, thanks for this. Just ran the numbers: "historical" (out-of-copyright) books make up 0.16% of the dataset, vs. 84% for "contemporary" (web) tokens.

Actually, does anyone know a way we might estimate/plot the "publication date" (or equivalent) of texts in The Pile, or whatever is the most current openly accessible training dataset for LLMs?

A really interesting new article on mobility in a fiction corpus: where do characters go, and how? It works at scales from place types (room to room) up to real-world, mappable locations. doi.org/10.48694/jcl...

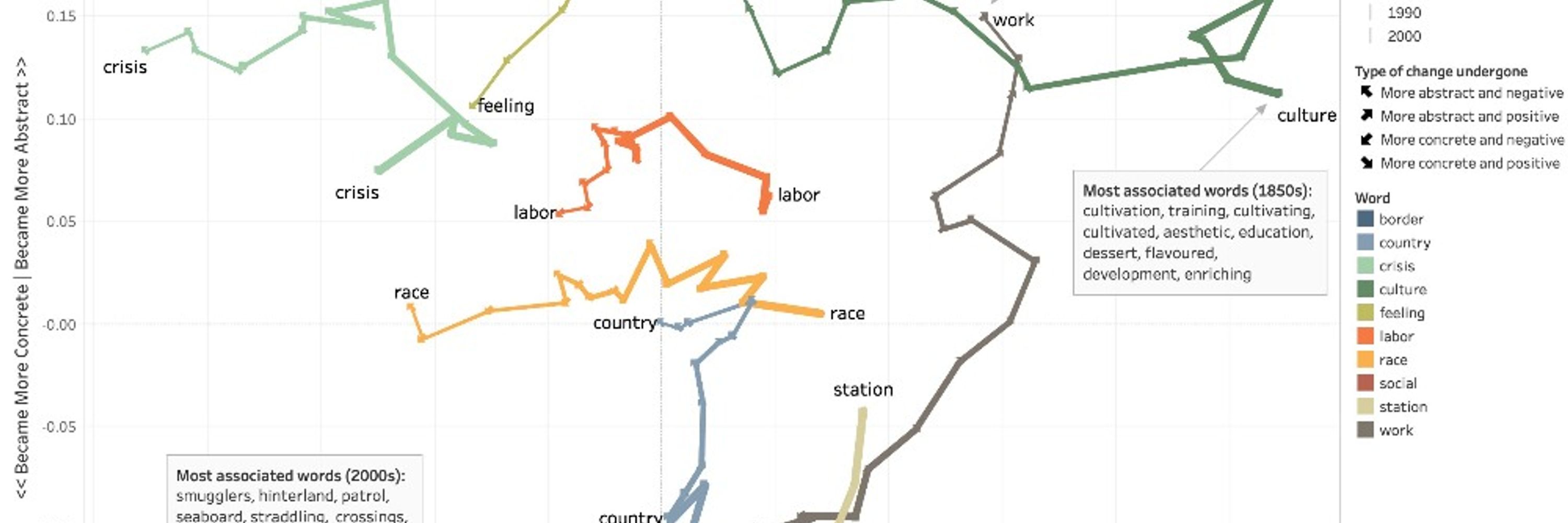

OK, one more: Random House's actual publication record of its novelists' race (from @richardjeanso.bsky.social's Redlining Culture) vs. LLMs prompted to "recall from memory" that record (authors, and their races, that RH published by year). The LLMs fantasize the same progress in diversity that So's book debunks as an industry myth.

Southampton Digital Humanities are looking for a Lecturer in Humanities Data Science (so, computational humanities in/from any humanities area) to lead on our new MSc Digital Humanities (Data Science). £45,163-£56,921 per annum. Full time. Permanent. Deadline 30 Oct. jobs.soton.ac.uk/Vacancy.aspx...

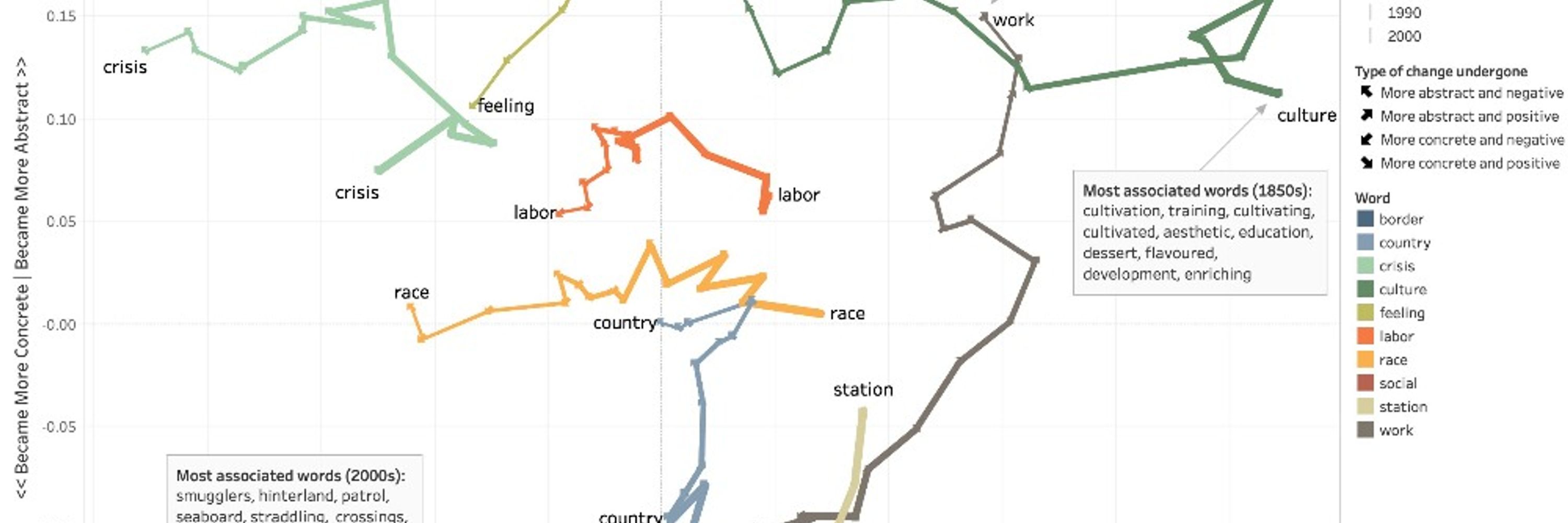

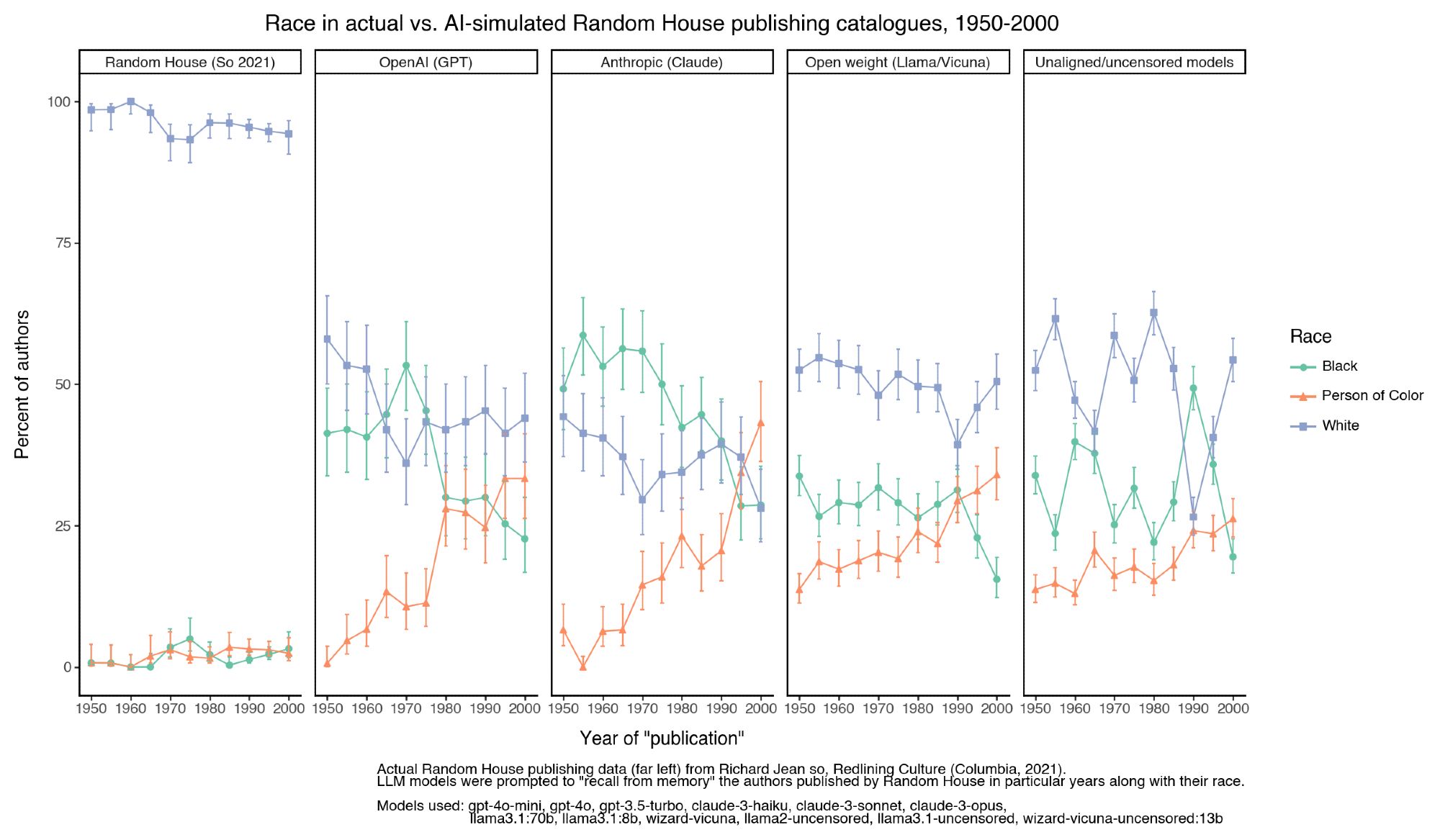

Yeah, that's my point (I think). What we see is the past distorted by expectations learned from its uneven collapse into the present (the training data). The aligned models then try to "correct" that past. Both encode history and its biases somehow, but bizarrely, ambiguously, nearly untraceably so.

Data on the gender of novelists in actual literary history is from @tedunderwood.me and @dbamman.bsky.social (culturalanalytics.org/article/1103...).

Bizarre AI/DH experiment: what share of the authors AI models create are women, when the models are asked to invent a random author for a novel published in a given decade? Aligned (i.e. not uncensored) models hover around 100% women; actual literary history sits around 50%; unaligned/uncensored models are all over the place.

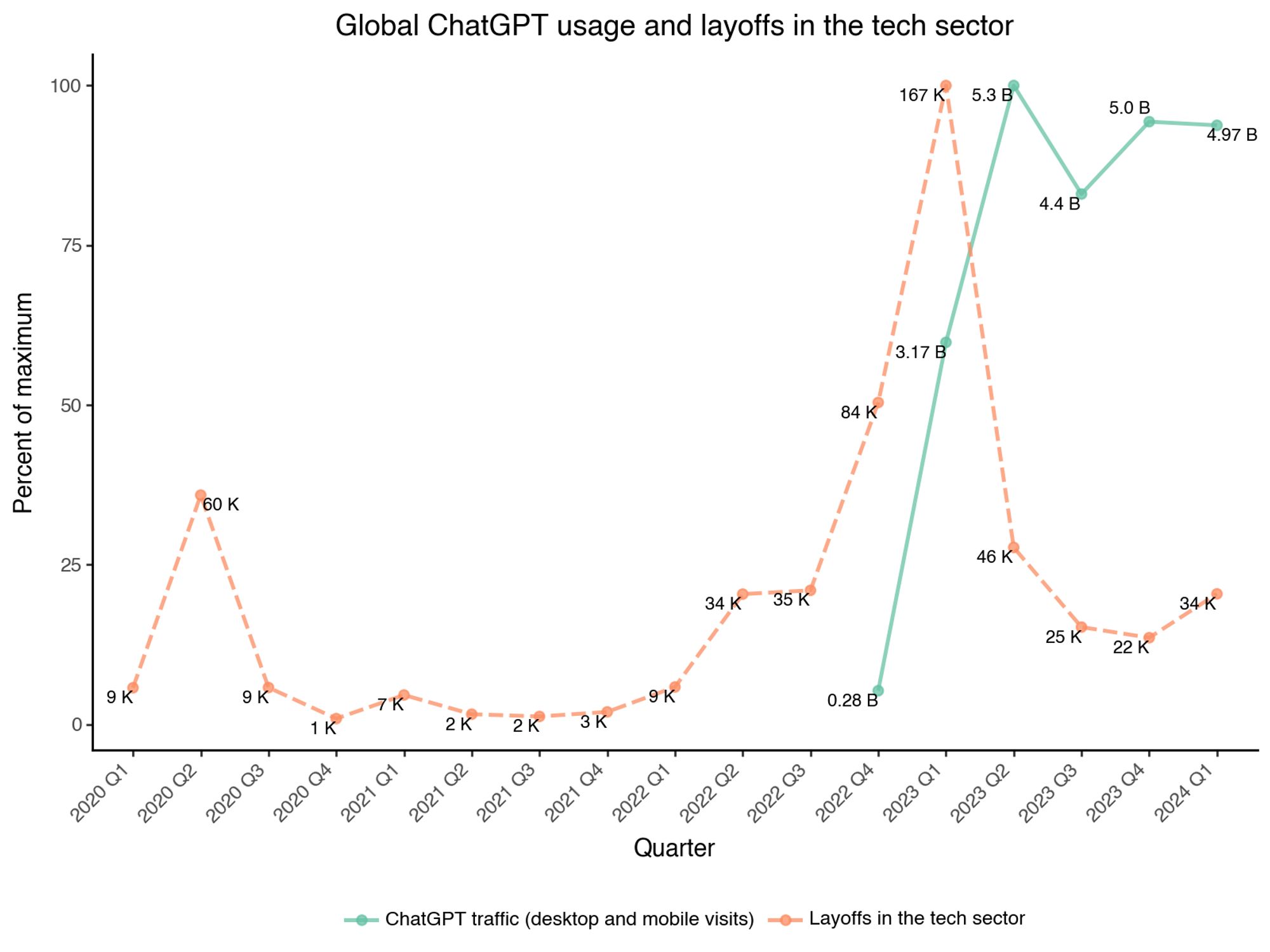
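A minimal sketch of the counting step behind an experiment like this, assuming each invented author has already been coded with the decade requested in the prompt and a gender label (the post doesn't say how that coding was done). The function name `share_women_by_decade` and the `"woman"` label are hypothetical:

```python
from collections import defaultdict


def share_women_by_decade(records):
    """Return {decade: fraction of invented authors labelled "woman"}.

    records: iterable of (decade, gender) pairs, e.g. (1850, "woman").
    The label scheme is a hypothetical assumption, not the original coding.
    """
    counts = defaultdict(lambda: [0, 0])  # decade -> [women, total]
    for decade, gender in records:
        counts[decade][1] += 1
        if gender == "woman":
            counts[decade][0] += 1
    # Sort by decade so the result reads chronologically when plotted.
    return {d: w / t for d, (w, t) in sorted(counts.items())}
```

Running this separately on each model's responses, and on a historical baseline coded the same way, gives per-decade shares that can be compared directly.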

Just compiled this graph of worldwide tech layoffs vs. global ChatGPT traffic, using data extracted from various existing charts.

boooo both ACLA and ASECS are virtual next year