No need to manually gather and compare benchmark data! BenchBench provides a centralized platform with a curated database and standardized methodology for effortless benchmark agreement testing. You can also use them with our package here: github.com/IBM/BenchBench

A package dedicated for running benchmark agreement testing - IBM/benchbench

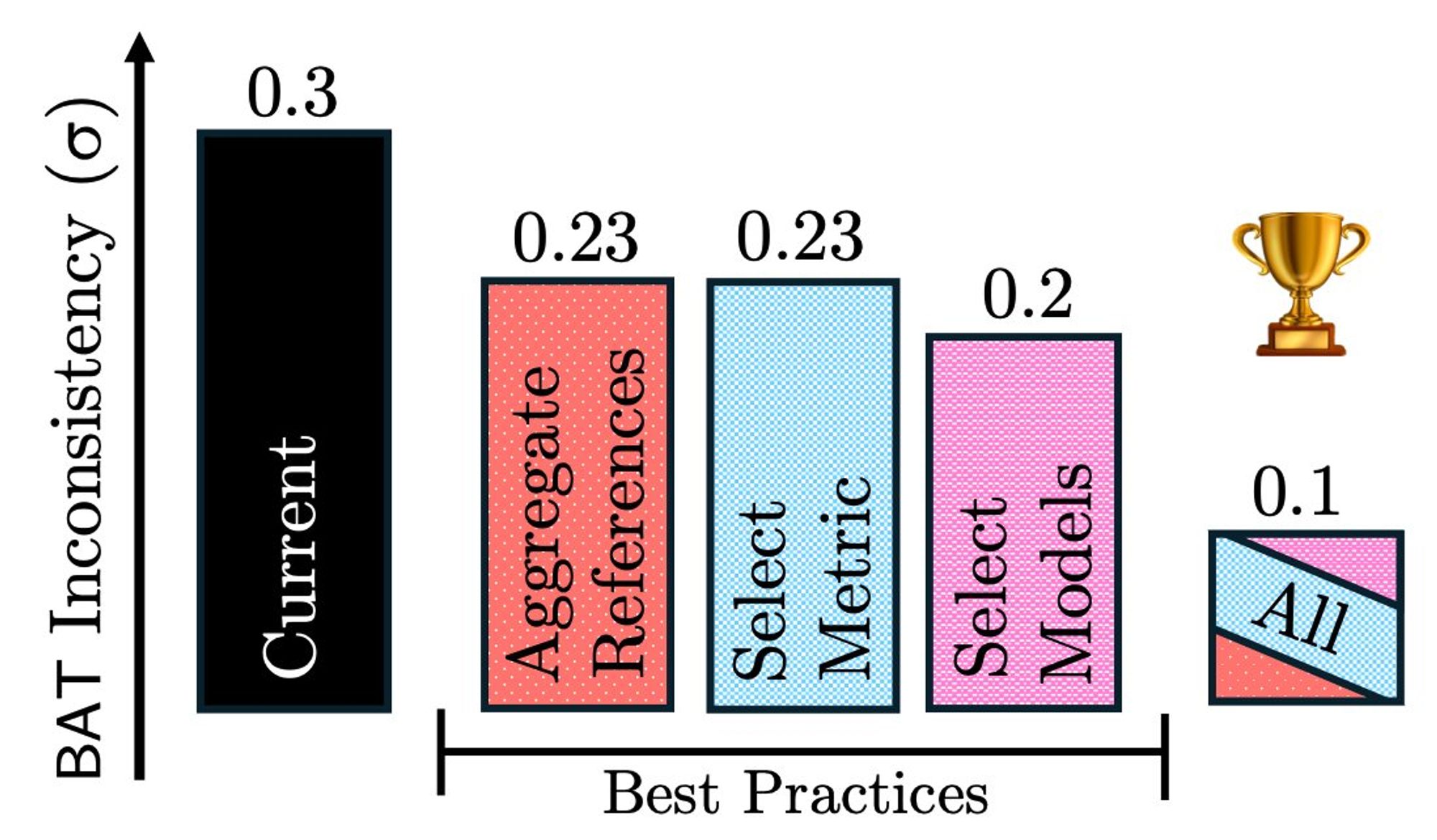

Currently, benchmark comparisons are often ad-hoc and inconsistent making results untrustworthy and benchmark choice 🤮 BenchBench & our findings: www.alphaxiv.org/abs/2407.13696 offer standard and transparent comparisons to reduce variance and increase confidence in your evaluations!🎉

People claim to only trust chatbot arena!🤖 This is impossible though, cheaper datasets give the same scores... So, what if you don't have an army of annotators at your disposal? 🤔 www.alphaxiv.org/pdf/2407.13696huggingface.co/spaces/ibm/b...#ML#evaluation#llm#llms#machinelearning#data#data

Discover amazing ML apps made by the community

Yes, we totally agree here (well you put it extremely, but in essence)

Please ask us anything, share, discuss and talk to us, we are going to make it real! Together! Much much more in the paper: alphaxiv.org/abs/2408.16961

Human feedback on conversations with language language models (LLMs) is central to how these systems learn about the world, improve their capabilities, and are steered toward desirable and safe behavi...

The feedback from all models will be open and collected in one pool, helping beyond the specialized models created to future research and general improvement

We believe a successful ecosystem must center around feedback loops where anyone can spin up a community model, for storytelling, Bengali or anything else Others can use it, give feedback, and benefit from a model that keeps improving with the contributions

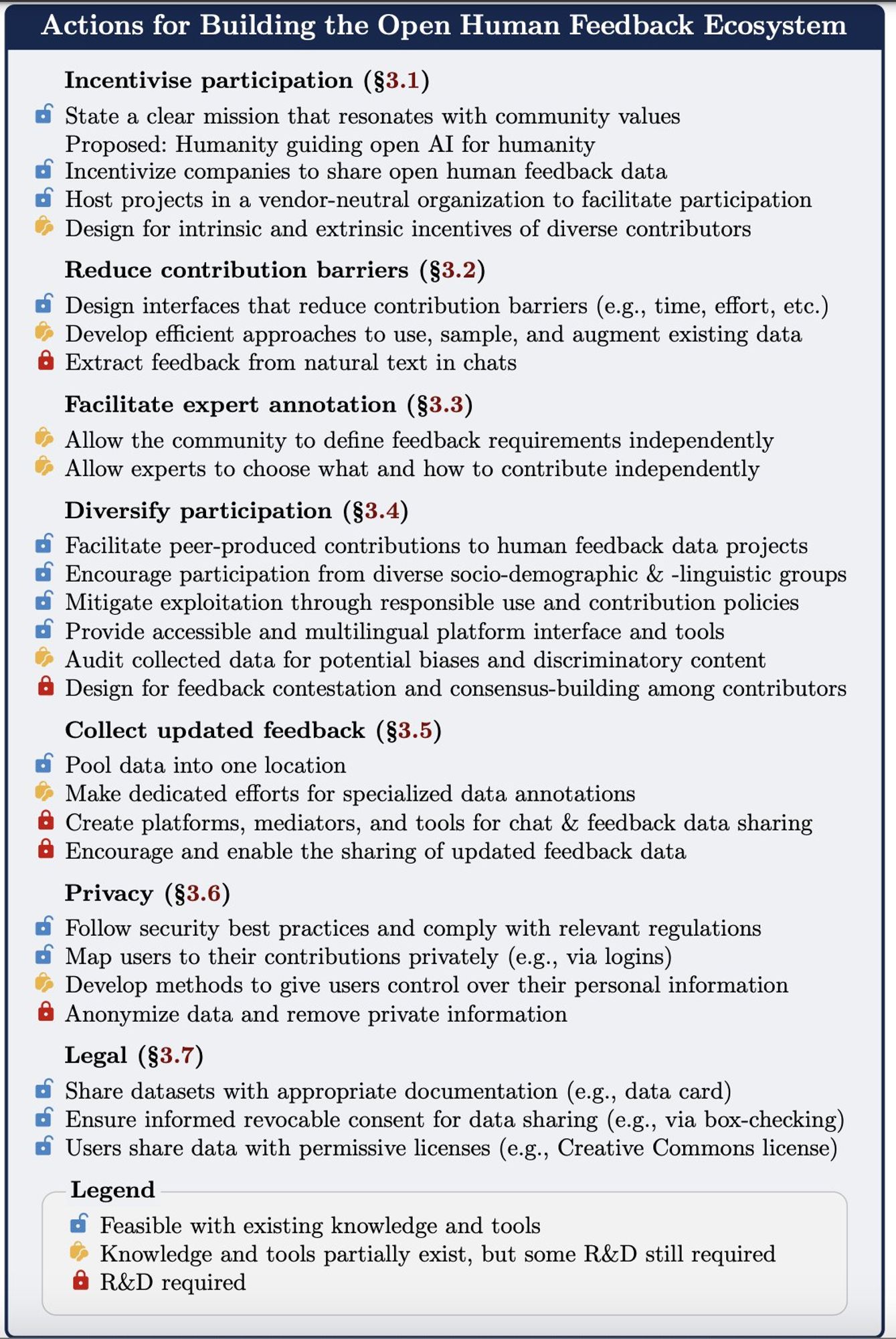

Then, we hone on 6 crucial areas to develop open human feedback ecosystems: Incentives to contribute, reducing contribution efforts, getting expert and diverse feedback, ongoing dynamic feedback, privacy and legal issues.

In our paper, we first learn from peer production efforts like wiki and stack overflow. These case studies tell us how important it is to align incentives of different bodies, allow the community to dictate the policies, etc.

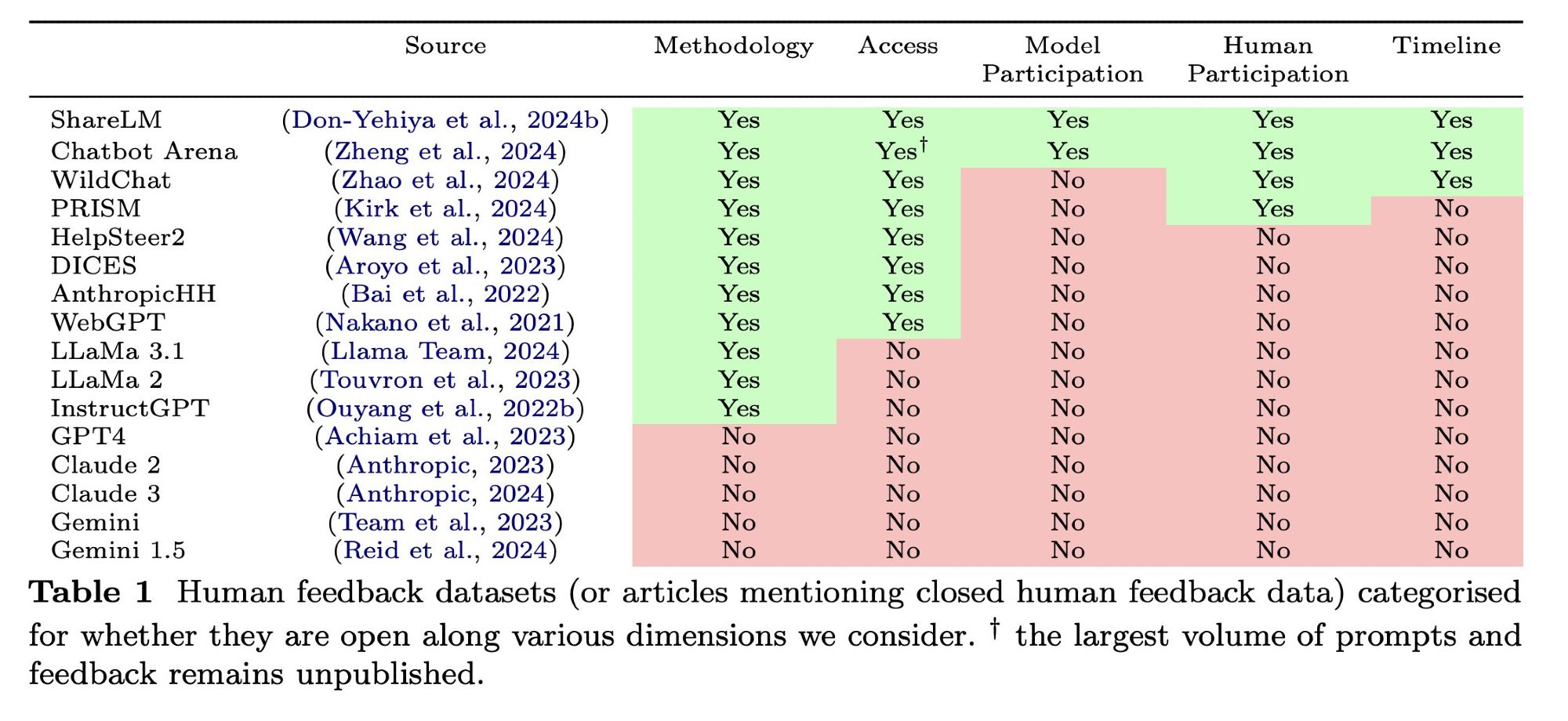

We define 5 axes of openness: Methodology (how its collected) Access (who can use it) Models (one\many) Contributors (as diverse as its uses?) Time (keeps updating? closed models improve over several feedback iterations, and of course, models change) Is current feedback open?🥶