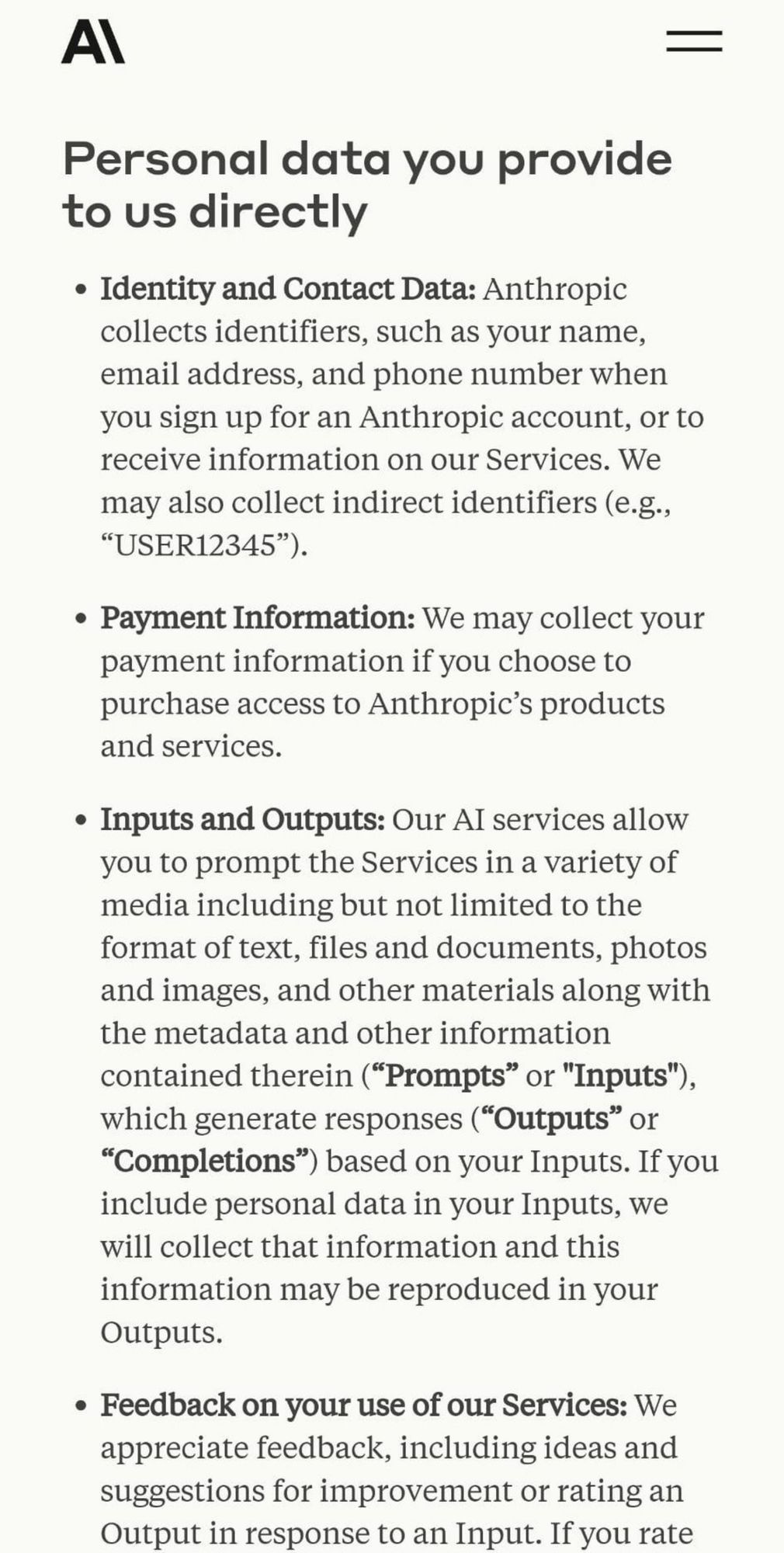

Anthropic now admits using personal data. They updated their policy yesterday, which is now basically the same as OpenAI's.

One thing I've learned covering Tesla is that a lot of people have this very deeply-ingrained but also deeply wrong bias towards believing that tech companies have a fundamental respect for the environment that industrial companies don't. The environmental impact of AI is really exposing this myth.

“If you are willing to construe generative models as knowledge producers, as truth-tellers, despite the obvious leaps of illogic it requires to think that, it must be because you really want to believe in the power to indoctrinate.” Always read @robhorning.bsky.social.

Google recently released a generative model called Gemini but had to suspend its image-generation capabilities because of how it was programmed to address brand safety concerns. In part because of ho...

Some news: Google is trimming jobs in its trust and safety group, just as the company is also asking those employees to be on standby over the weekend in order to troubleshoot problematic Gemini outputs www.bloomberg.com/news/article...

The layoffs, part of a broader staff reduction, come amid backlash over AI chatbot Gemini

This is the final boss for Spotify not paying artists.

Users can edit the AI-generated music clips with text as well, increasing intensity or extending the length of the clip.

Is it too speculative to imagine that if teachers use copilots as pedagogic personalized learning assistants in class, and kids encounter bad content or bomb a test, that parents will make a fuss blaming the school, and teachers will blame the AI, and the companies will blame the kids?

When gen A.I. responds in harmful ways, companies’ first response is that users manipulated the bots, and I wonder how long they’ll be able to get away with that excuse.

Microsoft Corp. said it’s investigating reports that its Copilot chatbot is generating responses that users have called bizarre, disturbing and, in some cases, harmful.

Microsoft, the most valuable company in the world, is blaming its users for Copilot’s unsafe output. www.bloomberg.com/news/article...

Rob Horning’s latest essay on gen ai resonates: The purpose of gen ai is “the ability to force other people to see a particular version of reality. It is a tool of power that masquerades as a tool of knowledge.”

No evidence to tie fake images, including one created by Florida radio host, to Trump campaign, BBC Panorama investigation finds