To appreciate how clueless we probably are about the effects of AI, remember that Neuromancer thought cyberspace was going to be used for darkly grand heists. It took us another 20-30 years to understand that cyberspace was transformational mostly because of cat videos and Great Dismal tweets.

But can your Rivian handle the melee attacks of the Byzantine Emperor’s Catalan mercenaries? I don't think so.

You gotta get this Tesla Cybertruck, bro. It’s great. Am I a paid spokesperson? Sort of. I’m a person who paid $8 a month to “spokes” for them. Thi...

Timing, eh?

Just pushed my latest newsletter, with some OpenAI Keynote coupled with Microsoft's Ignite, a dash of prompt engineering, and a cautionary tale to end the day.

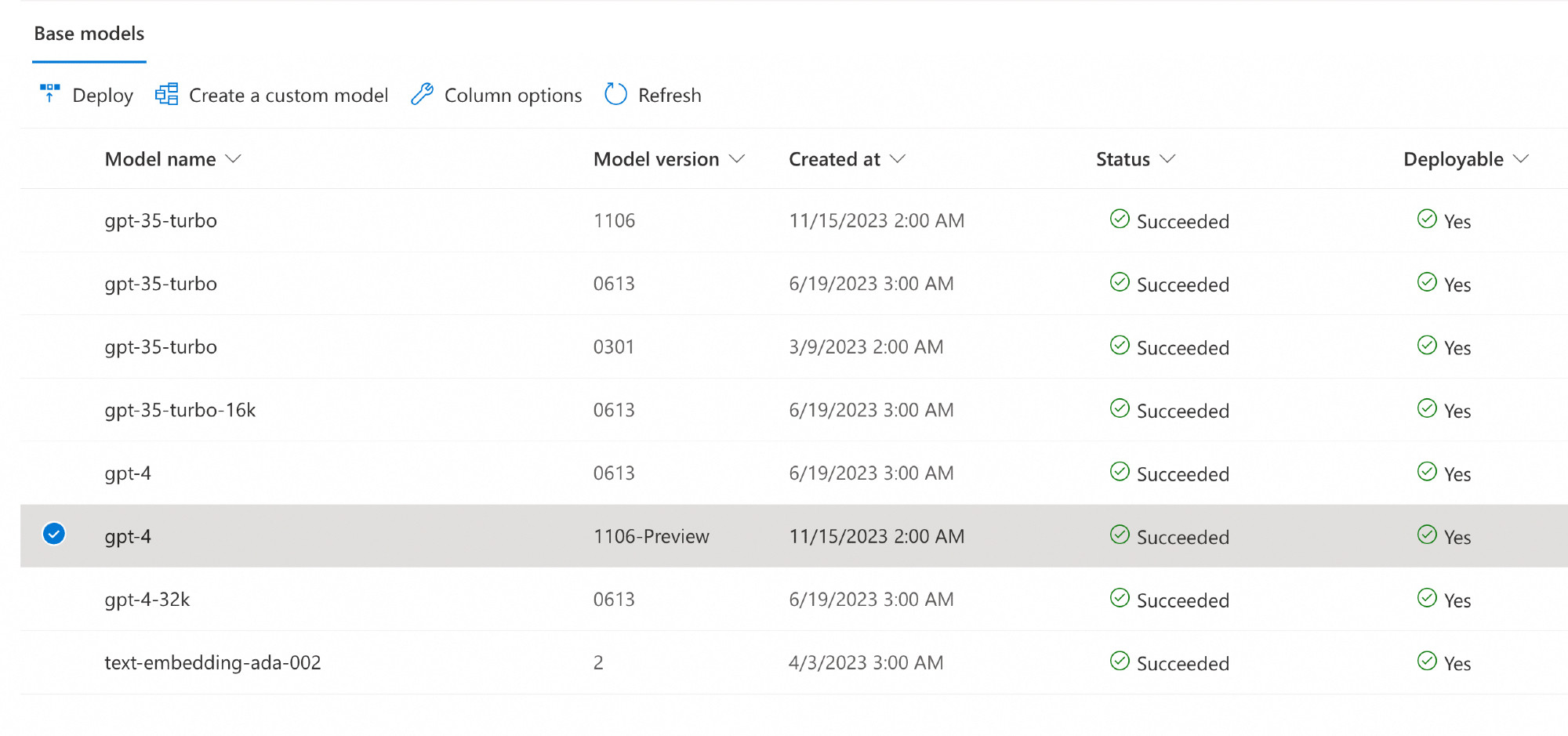

Hi friends, and welcome to a rather packed edition of this here newsletter. Lots and lots of interesting things going on apparently! Here goes 🚀 First things first: the OpenAI DevDay Keynote. I wat...

Yo dawg, I heard you like OpenAI. So I took the OpenAI DevDay Keynote, transcribed it with Whisper, summarized it with GPT-4 Turbo, rendered images illustrating the main points with DALL-E 3, and narrated the whole thing with OpenAI's TTS. Available here: www.youtube.com/watch?v=zOgm...

I heard you like OpenAI, so I used: - OpenAI's Whisper to transcribe the OpenAI DevDay Keynote, - OpenAI GPT-4 Turbo to summarize the transcript, come up with...

So let me get this straight: GPT-4 Turbo has a 128k-token context window, but you can only send it 10k tokens a minute?!? That's...quite something.

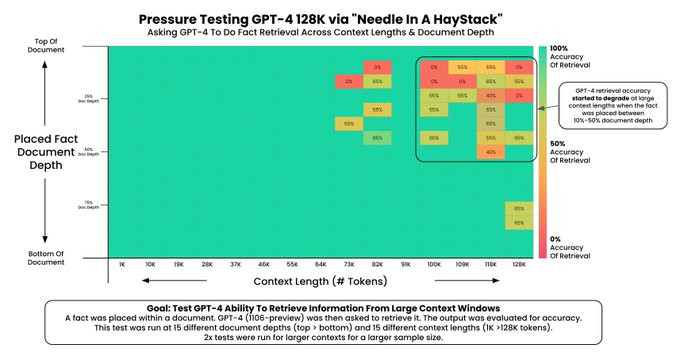

Greg Kamradt spent $200 to test the new 128,000-token context length on GPT-4. Can it remember a fact buried in tens of thousands of words of prose? The answer: yes, up to about 64k tokens. Beyond that, it matters where you placed the fact in the prompt. link to thread: twitter.com/GregKamradt/...
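
For anyone curious what a test like that looks like in practice, here is a minimal sketch of the prompt-building half only. It is not Greg Kamradt's actual code: the function names, the filler text, and the "needle" sentence are all made up for illustration, and word counts stand in for real token counts. Sending each prompt to a model and scoring the answers is left out.

```python
def build_haystack_prompt(filler_sentences, needle, depth):
    """Insert `needle` at a relative depth in the filler text.

    depth = 0.0 puts the needle at the very start, 1.0 at the very end.
    (Hypothetical helper, written for this illustration only.)
    """
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    position = round(depth * len(filler_sentences))
    sentences = filler_sentences[:position] + [needle] + filler_sentences[position:]
    return " ".join(sentences)


def approx_token_count(text):
    # Rough assumption: whitespace-separated words stand in for tokens.
    # A real test would use the model's own tokenizer.
    return len(text.split())


# Made-up filler and needle; a real run would use long-form prose.
filler = [f"Filler sentence number {i}." for i in range(1000)]
needle = "The secret ingredient is cardamom."

# One prompt per placement depth, so recall can be compared by position.
prompts = {
    depth: build_haystack_prompt(filler, needle, depth)
    for depth in (0.0, 0.25, 0.5, 0.75, 1.0)
}
```

Varying both the filler length and the depth gives the kind of grid the thread describes: how well the fact is recalled as a function of context size and of where in the prompt it was placed.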

Cartoon: Death and Dream Rabbit. I guess I might be just pandering now ;) but they are such a fun couple to draw. Enjoy!