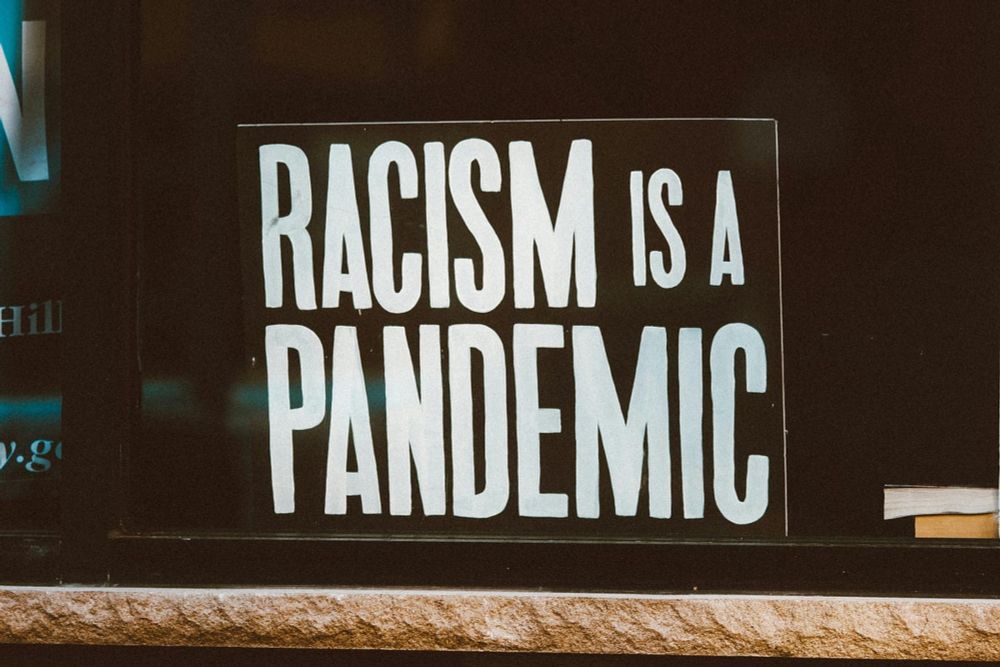

Breaking the Digital Echo: Navigating the Complexities of Social Media Bubbles bit.ly/3ZuExok #EchoChamber #SocialMedia #Polarisation #AlgorithmicBias #DigitalDiversity

Are your social media feeds a mirror of your own beliefs? Explore the world of digital echo chambers and discover how social networking…

In honor of the #ADA’s #AlgorithmicBias cdt.org/insights/rep...

This report was also authored by Bonnielin Swenor, Director of the Johns Hopkins Disability Health Research Center. Introduction: When people with disabilities interact with technologies, there is a risk that they will face discriminatory impacts in several important and high-stakes contexts, like employment, benefits, and healthcare. For example, many jobs use automated employment decision tools […]

Fairness in AI can't be fully automated: - EU's non-discrimination laws rely on context and judicial interpretation, not easily automated. - Algorithmic bias differs from human bias, lacking clear signals. arxiv.org/abs/2005.05906 #AI #AlgorithmicBias

This article identifies a critical incompatibility between European notions of discrimination and existing statistical measures of fairness. First, we review the evidential requirements to bring a...

How Tech Disrupted State Services netopia.eu/how-tech-dis... #algorithmicbias #backend #underseacable #Universalaccess #InternetExchangePoints #DigitalSovereignty

The future of the internet: Netopia takes its departure point in human rights and a broad perspective on society’s digital evolution.

medium.com/ai-news/lift... #AIbias #ChatGPT #GeminiAI #AIPrejudice #TechEthics #AlgorithmicBias #MachineLearning #EthicalAI #TechBias #DataEthics #AIWorldVision

The rapid advancement of AI (artificial intelligence) has accelerated its presence across all domains. Nevertheless, the escalating…

www.theguardian.com/australia-ne... #algorithm #ai #artificialintelligence #bias #algorithmicbias #discrimination #refugees

Security Risk Assessment Tool – or SRAT – and similar algorithmic tools condemned as ‘abusive’ and ‘unscientific’

GPT 3.5 tests reveal concerning biases favoring certain groups solely based on names during job screening and ranking processes. Addressing algorithmic bias is crucial for fair and inclusive hiring practices. #AlgorithmicBias #FairHiring www.reddit.com/r/martechnew...

Automating Automaticity: How the Context of Human Choice Affects the Extent of #AlgorithmicBias https://bfi.uchicago.edu/wp-content/uploads/2023/02/BFI_WP_2023-19.pdf automatic behavior seems to lead to more biased algorithms: …users report more automatic behavior when scrolling … 1/3

The ultimate proof that AI has no clue. I mean, THAT cake ... #algorithmicbias #miasanmia www.bing.com/images/creat...