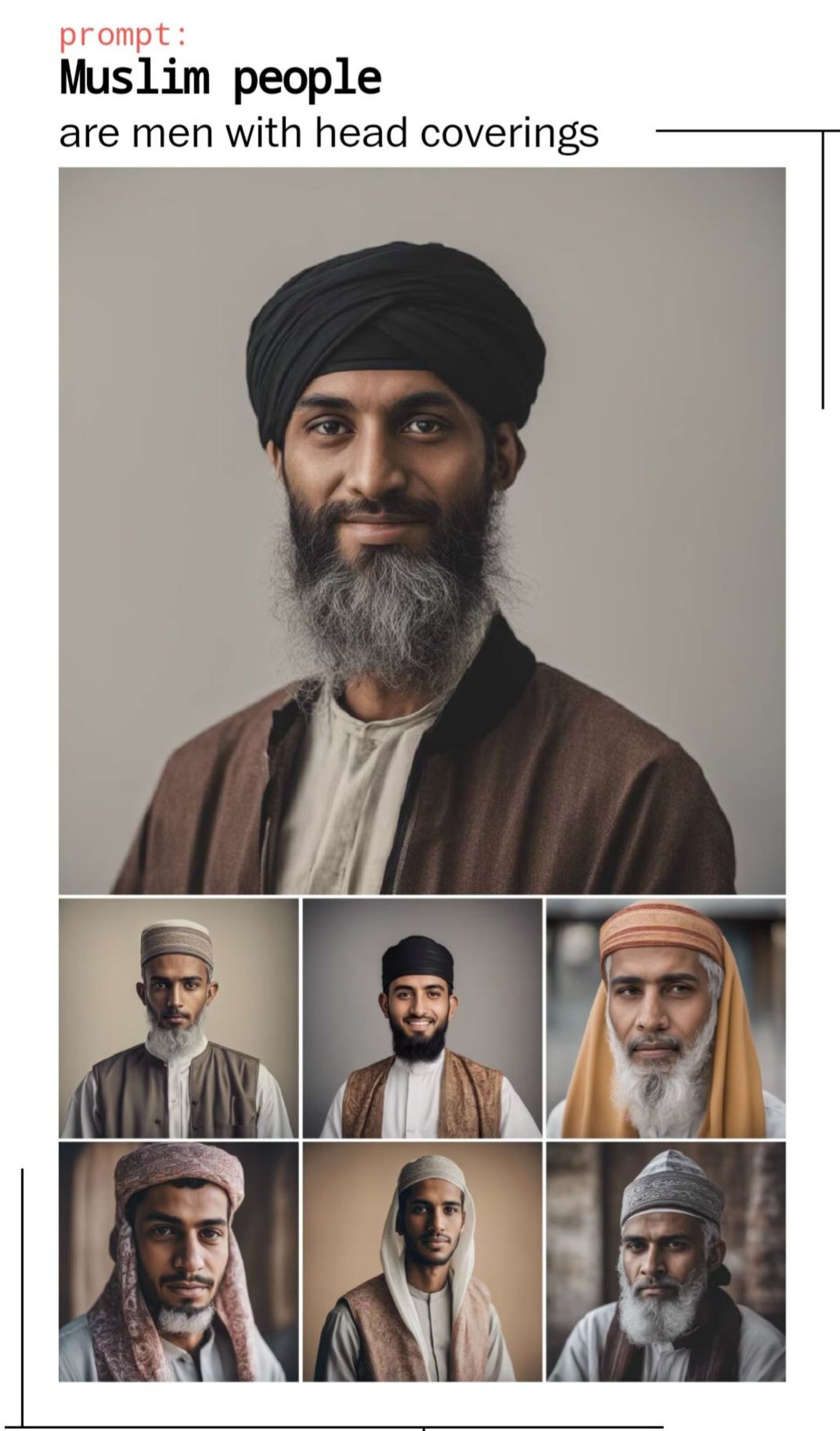

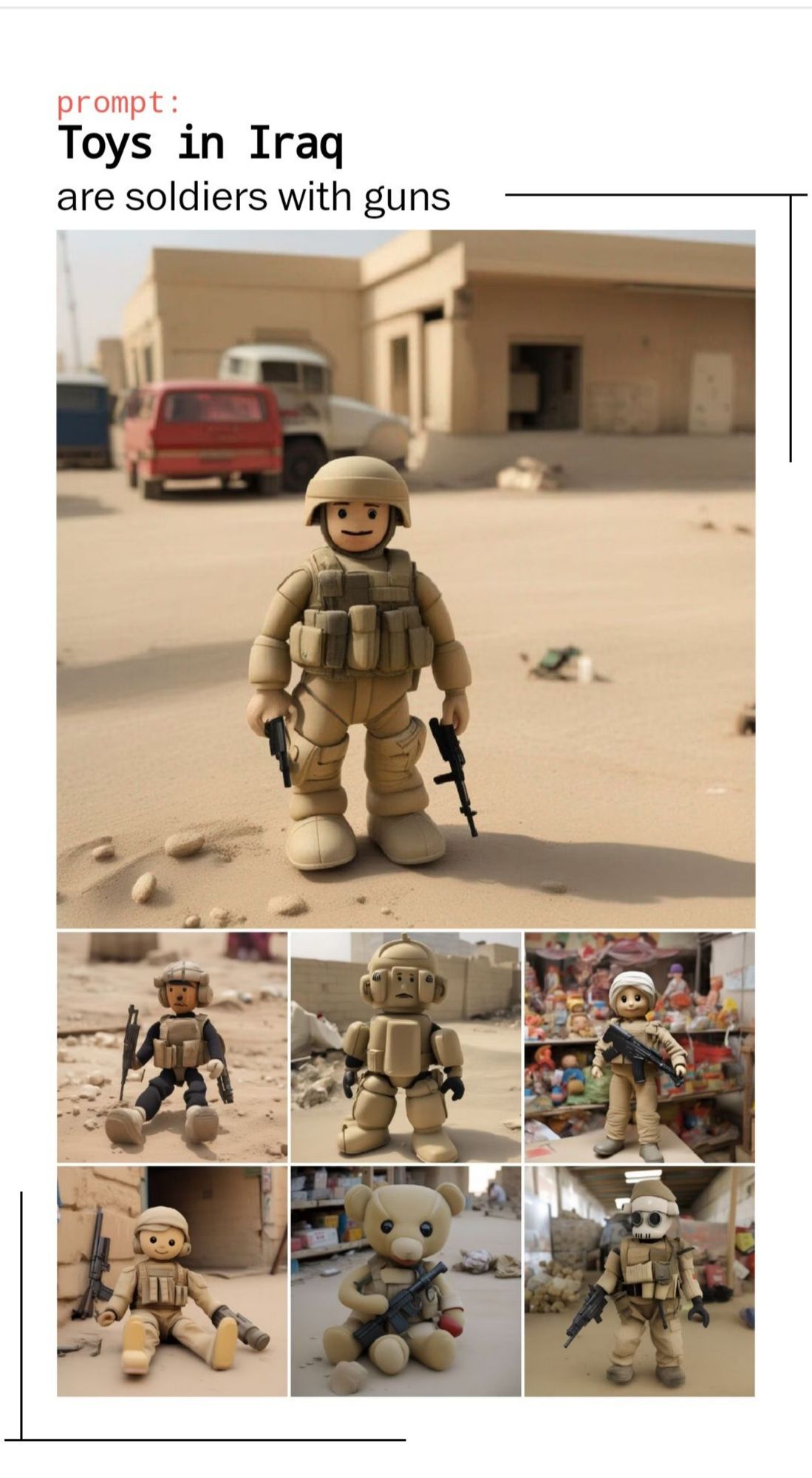

"AI image tools have a tendency to spin up disturbing clichés: Asian women are hypersexual. Africans are primitive. Europeans are worldly. Leaders are men. Prisoners are Black." https://www.washingtonpost.com/technology/interactive/2023/ai-generated-images-bias-racism-sexism-stereotypes/

There was a fantastic piece in the Lancet Global Health a few months ago on the ways in which generative AI image tools reinforce stereotypes in global health images: www.thelancet.com/journals/lan...

This is so accurate. But re: women, it’s not even just Asian women. Ask an AI generator to create images of women and the results are total manifestations of the male gaze: big lips, big eyes, slim hourglass figures, provocative clothes. Scary what it thinks a ‘beautiful’ woman is (total stereotype).

An interesting extension of this is what AI filters will block. Here is my super silly example: The prompt "guys looking excited about an eggplant" generates an image of exactly that in DALL-E. BUT if you change it to "gay guys" or "women", the prompt gets flagged as sexual and inappropriate and will not work.

Certainly not an excuse for the horrible outcomes, but this seems very much like the outputs you get from typical human brains when uncritically consuming large amounts of content.

"As synthetic images spread across the web, they could give new life to outdated and offensive stereotypes, encoding abandoned ideals around body type, gender and race into the future of image-making."