I don't know what will be possible. It's scary to imagine AI improving to the point where the future becomes uncertain. But it's clear that, as of now, these machines can't do a lot of what makes us human.

What exactly is it that I'm suggesting that you think is insulting?

I agree.

Respectfully, I see a lot of commercial entertainment and not a lot of art in what comes out nowadays. Also, technology has eliminated countless jobs in the past. I don't see how that can be avoided. That said, I agree that a computer can't relay the human experience... (Yet?)

Assuming it one day writes good ones (which is not a given, sure), why would you care if it's been written by a computer?

Many stories written by humans are at some level inspired by existing material, and are full of plot holes. A majority of people still enjoy them.

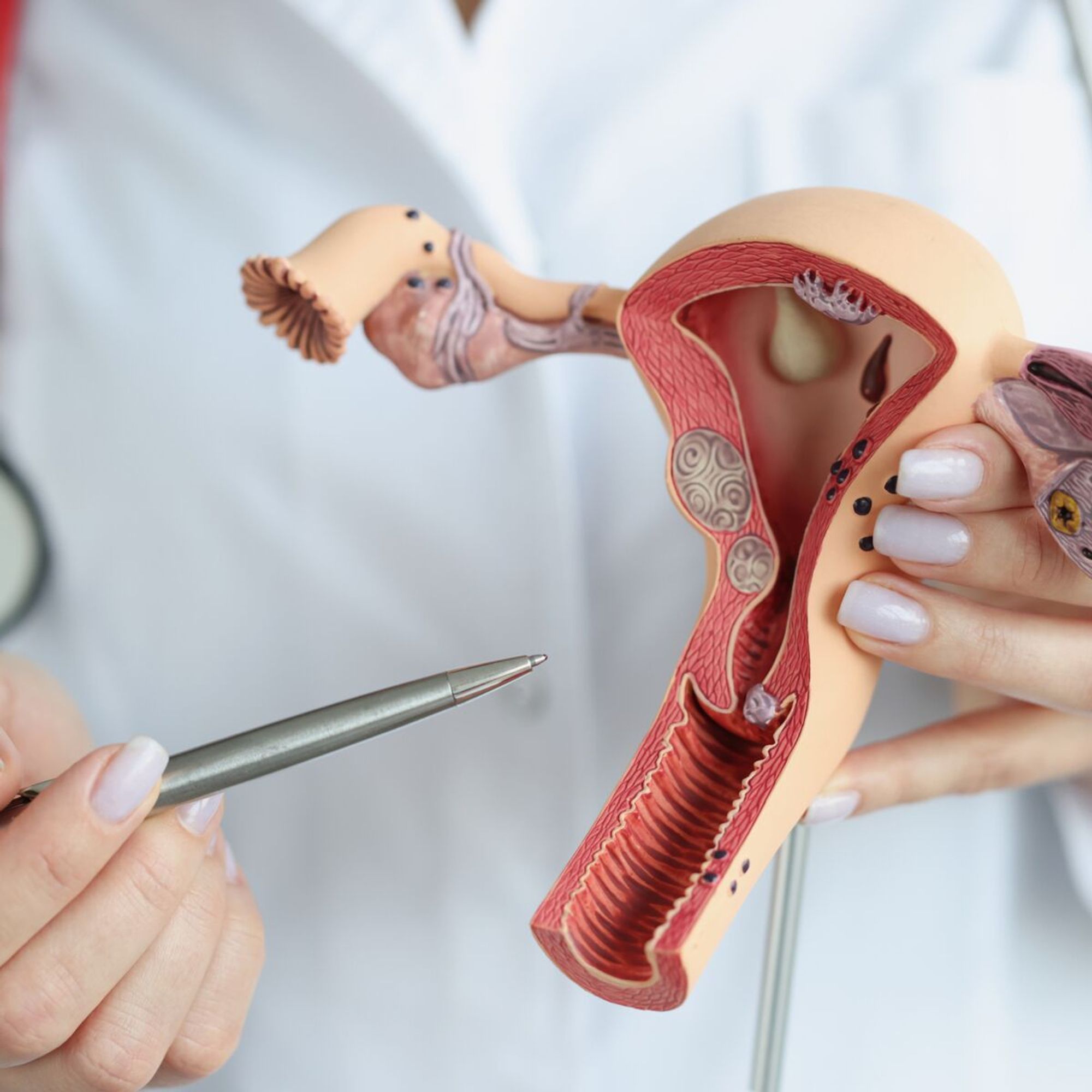

Almost all cervical cancers (99.7%) are caused by infection with HPV (human papillomavirus). No cases of this cancer have been reported in Scotland among women born between 1988 and 1996 who received the HPV vaccine at age 12-13. 1/2

ChatGPT-4 says: The sentence "Thiss sentance has ten errors." has three errors: 1. "Thiss" should be "This." 2. "sentance" should be "sentence." 3. The claim of ten errors is incorrect; there are only two. chat.openai.com/share/f242ee...

LLMs are typically trained on far more data than could possibly be stored in their parameters. No "copying from" is possible; the model just combines probabilities to build the reply token by token.
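To make "token by token" concrete, here's a toy sketch in Python. Everything in it is made up for illustration: the `NEXT_TOKEN_PROBS` table and the `generate` function are hypothetical, and a real LLM computes its probabilities with a neural network over the whole context rather than looking them up in a small table. The only point is the loop: pick the next token from a probability distribution, append it, repeat.

```python
import random

# Hypothetical toy "model": for each previous token, the probabilities of the
# next token. A real LLM computes these with a neural network over the full
# context instead of reading them from a table.
NEXT_TOKEN_PROBS = {
    "<start>": {"the": 0.6, "a": 0.4},
    "the": {"cat": 0.5, "dog": 0.5},
    "a": {"cat": 0.7, "dog": 0.3},
    "cat": {"sleeps": 0.8, "<end>": 0.2},
    "dog": {"sleeps": 0.6, "<end>": 0.4},
    "sleeps": {"<end>": 1.0},
}


def generate(rng, max_tokens=10):
    """Build a reply one token at a time by sampling from the toy table."""
    tokens = []
    current = "<start>"
    for _ in range(max_tokens):
        dist = NEXT_TOKEN_PROBS[current]
        candidates = list(dist)
        weights = [dist[tok] for tok in candidates]
        # Sample one next token in proportion to its probability.
        current = rng.choices(candidates, weights=weights, k=1)[0]
        if current == "<end>":
            break
        tokens.append(current)
    return " ".join(tokens)


# A seeded generator makes the run repeatable.
print(generate(random.Random(0)))
```

Different seeds can give different sentences, because each step is a weighted random draw rather than a lookup of stored text.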

How to prove you don't get how an LLM works without explicitly saying it.