Sure! Thanks for checking beforehand, appreciate it :)

First thing that sprang to mind when I heard about this prize. I'm sure the Nobel committee has people investigating the true contribution of candidates with cross-interviews and such, but this is still awkward PR that fuels the unfortunate "lone genius" myth of science.

The objective function itself is something I tried to steer away from, which is why it is identical across my models: fixing it abstracts it away from the conclusions. I agree the "intuition" has limits; even a baby moves with purpose eventually, but learning continues!

Hi all, I'm here to advertise our new preprint: www.biorxiv.org/content/10.1... @tyrellturing.bsky.social @glajoie.bsky.social!

Classical psychedelics induce complex visual hallucinations in humans, generating percepts that are coherent at a low level, but which have surreal, dream-like qualities at a high level. While there a...

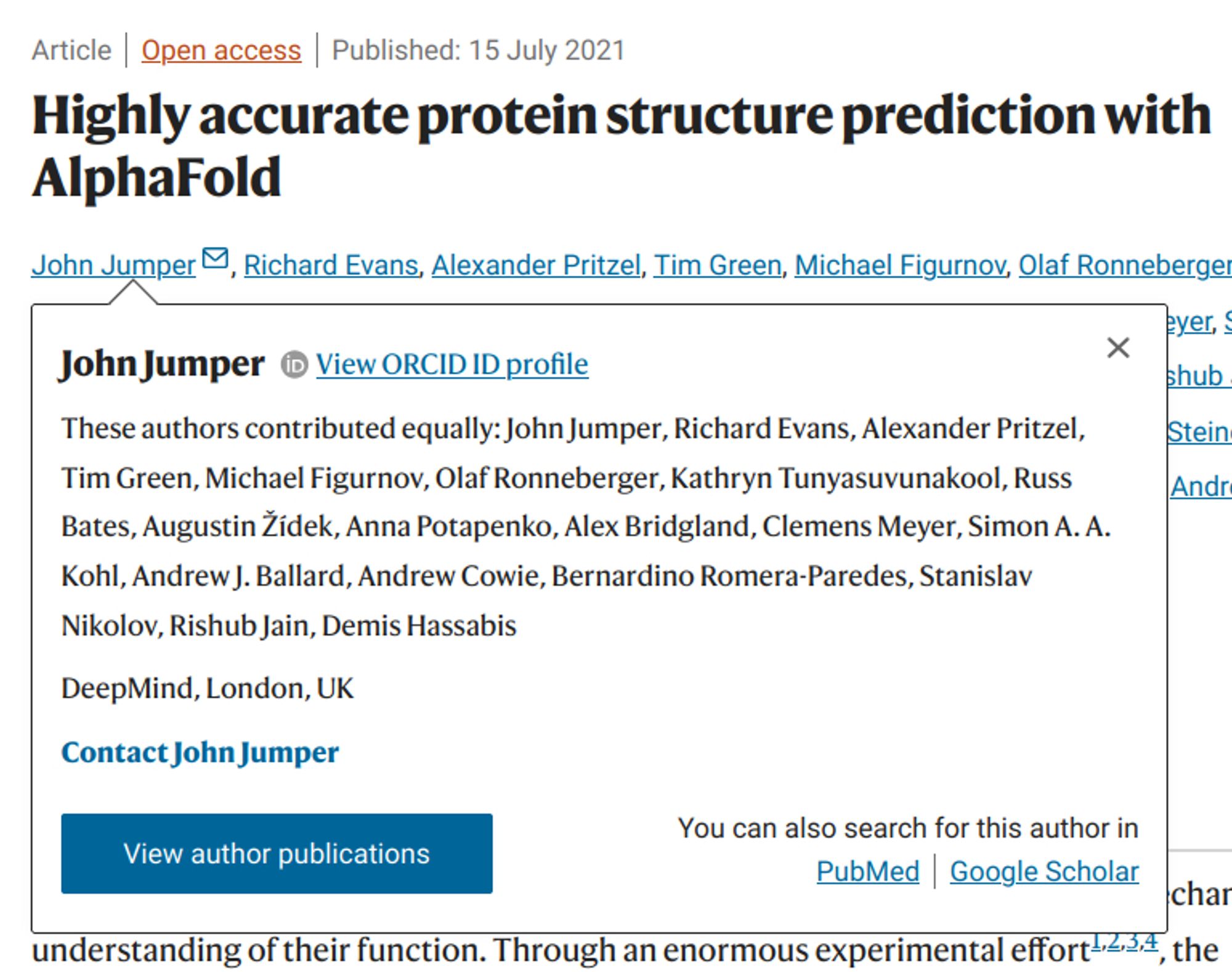

Investigating the experimentally verifiable impact of different credit assignment mechanisms for learning in the brain is a crucial endeavor for computational neuroscience. Here is our take on motor learning and the RL/SL (reinforcement vs. supervised learning) question, viewed through neural representations in cortex.

Here's our latest work from @glajoie.bsky.social's and @mattperich.bsky.social's labs! Excited to see this out. We used a combination of neural recordings and modelling to show that RL yields neural dynamics closer to biology, with useful continual learning properties. www.biorxiv.org/content/10.1...

During development, neural circuits are shaped continuously as we learn to control our bodies. The ultimate goal of this process is to produce neural dynamics that enable the rich repertoire of behavi...

Check out our new paper led by @oliviercodol.bsky.social: www.biorxiv.org/content/10.1... #neuroskyence #compneurosky #neuroai

We close by discussing some of our views on learning and plasticity in cortical structures of the brain. Happy to chat more with anyone thinking about these questions!

Finally, we show that the neural representations produced by RL have stabilizing properties when fine-tuning to new environmental dynamics. Unlike with supervised learning, fine-tuning leads to a representational reorganization that mirrors cortical plasticity in monkeys.

We tested these results in a biomechanically simplified setting and found that they completely break down, underlining that these different neural "solutions" actually depend on a more complex output-state map. This sheds new light on the idea of "universal solutions" for neural networks.

We found that models trained with RL aligned much better to monkey data in a matched reaching task. This held both for a crude geometric metric (CCA) and for a dynamics metric (DSA), across tasks/datasets and monkeys.
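For readers unfamiliar with the geometric metric, here is a minimal sketch of a CCA-based similarity score between two activity matrices (time points × units), e.g. model units vs. recorded neurons on the same reaching trials. This is not the preprint's pipeline: the PCA-then-CCA preprocessing, the averaging of the top canonical correlations, and the names `top_pc_basis` / `cca_similarity` are all assumptions made for illustration. It relies on the standard fact that, given orthonormal bases for two centered subspaces, the canonical correlations are the singular values of the product of those bases.

```python
import numpy as np


def top_pc_basis(x: np.ndarray, k: int) -> np.ndarray:
    """Orthonormal (time x k) basis for the top-k principal-component scores.

    Assumption (hypothetical helper): activity is first reduced with PCA,
    a common preprocessing step before CCA, not confirmed by the thread.
    """
    x = x - x.mean(axis=0, keepdims=True)  # center each unit over time
    u, _, _ = np.linalg.svd(x, full_matrices=False)
    return u[:, :k]  # columns are orthonormal and span the top-k PC scores


def cca_similarity(a: np.ndarray, b: np.ndarray, k: int) -> float:
    """Mean of the top-k canonical correlations between two (time x units) matrices.

    Hypothetical scoring choice: averaging the canonical correlations
    into a single number in [0, 1].
    """
    if a.shape[0] != b.shape[0]:
        raise ValueError("a and b must have the same number of time points")
    qa = top_pc_basis(a, k)
    qb = top_pc_basis(b, k)
    # Singular values of qa^T qb are the canonical correlations (descending).
    corrs = np.linalg.svd(qa.T @ qb, compute_uv=False)
    return float(np.mean(corrs))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    neural = rng.standard_normal((200, 10))      # stand-in for recorded activity
    mixing = rng.standard_normal((10, 10))
    model_aligned = neural @ mixing              # linear re-mixing of the same signals
    model_unrelated = rng.standard_normal((200, 10))
    print(cca_similarity(neural, model_aligned, k=10))
    print(cca_similarity(neural, model_unrelated, k=3))
```

Because CCA is invariant to invertible linear transforms within each subspace, a model whose activity is just a re-mixing of the recorded signals scores near 1, while unrelated activity scores low. This invariance is also why CCA is a fairly "crude" geometric comparison; it says nothing about whether the two systems share the same temporal dynamics, which is what a dynamics metric such as DSA targets.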