Looking forward to #CCN2024 2024.ccneuro.org/poster/?id=122 @ptoncompmemlab.bsky.social @DaphnaShohamy for supporting this project! (1/2)

Please retweet! I am excited to share that I am looking for motivated postdocs who want to come and work with me at NYU (please apply here: apply.interfolio.com/145544) (1/3)

How can we efficiently find task representations online that work well for the ongoing task while leveraging relations with other tasks? Our simulations show that episodic memory can help! URL: shorturl.at/tT246 Here’s a summary: 1/n

Generalization to new tasks requires learning of task representations that accurately reflect the similarity structure of the task space. Here, we argue that episodic memory (EM) plays an essential role in this process by stabilizing task representations, thereby supporting the accumulation of structured knowledge. We demonstrate this using a neural network model that infers task representations that minimize the current task's objective function; crucially, the model can retrieve previously encoded task representations from EM and use these to initialize the task inference process. With EM, the model succeeds in learning the underlying task structure; without EM, task representations drift and the network fails to learn the structure. We further show that EM errors can support structure learning by promoting the activation of similar task representations in tasks with similar sensory inputs. Overall, this model provides a novel account of how EM supports the acquisition of structured task representations. Competing Interest Statement: The authors have declared no competing interest.

Our paper on how blocked training supports learning of multiple schemas, with Andre Beukers, @collinsilvy.bsky.social @gershbrain.bsky.social rdcu.be/dEeaG #neuroskyence #psychscisky

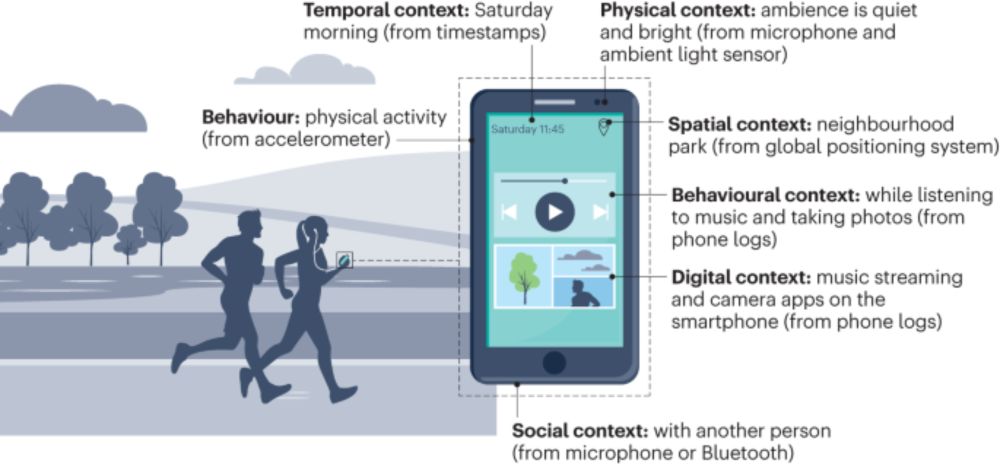

Understanding behaviours in context using mobile sensing www.nature.com/articles/s44... @natrevpsych.bsky.social

Mobile sensing methods can overcome methodological challenges to naturalistic observation and facilitate research about the link between everyday behaviours and psychological constructs. In this Revie...

"Large language models surpass human experts in predicting neuroscience results" w/ @ken-lxl.bsky.social braingpt.org arxiv.org/abs/2403.03230 1/6

Scientific discoveries often hinge on synthesizing decades of research, a task that potentially outstrips human information processing capacities. Large language models (LLMs) offer a solution....

Very much looking forward to Nicole's presentation about her vision for how to tackle the bench to bedside translation at CCN!

Brain and mind researchers of all types: I hope you'll join this conversation at Cognitive Computational Neuroscience (August 6-9, Boston). I'm envisioning a community-centered conversation unlike any I've seen before; because it's unusual, I unpack it here: www.nicolerust.com/grandplan

New preprint w/ Haley Keglovits & David Badre. lPFC flexibly codes tasks of diff structure. How? We test 2 prevalent ideas: 1) it uses a high dim, expressive geometry, agnostic to structure; 2) it learns tailored geometries for each structure. tldr - It's 1* www.biorxiv.org/content/10.1...

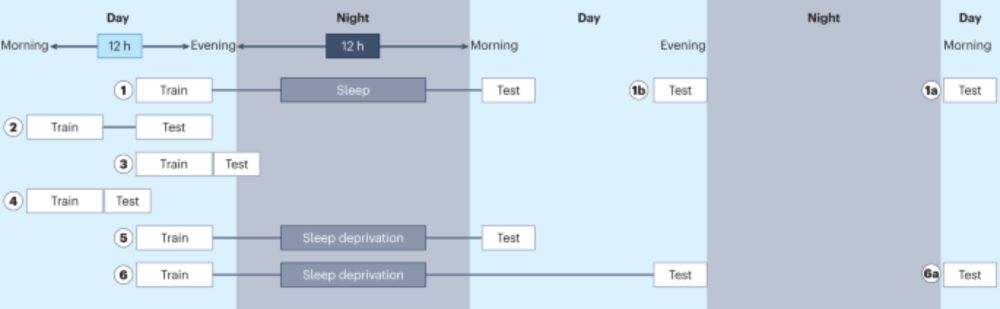

I want to add some new papers for my sleep and memory undergrad seminar this semester. What were your favorite papers in this field (any species, methodology) from the last couple years?

A recent review on methods in sleep and memory is a good one www.nature.com/articles/s44...

Studies of the effect of sleep on learning and memory sometimes reveal conflicting or unreliable results. In this Perspective, Nemeth and colleagues review methodological challenges and make recommend...