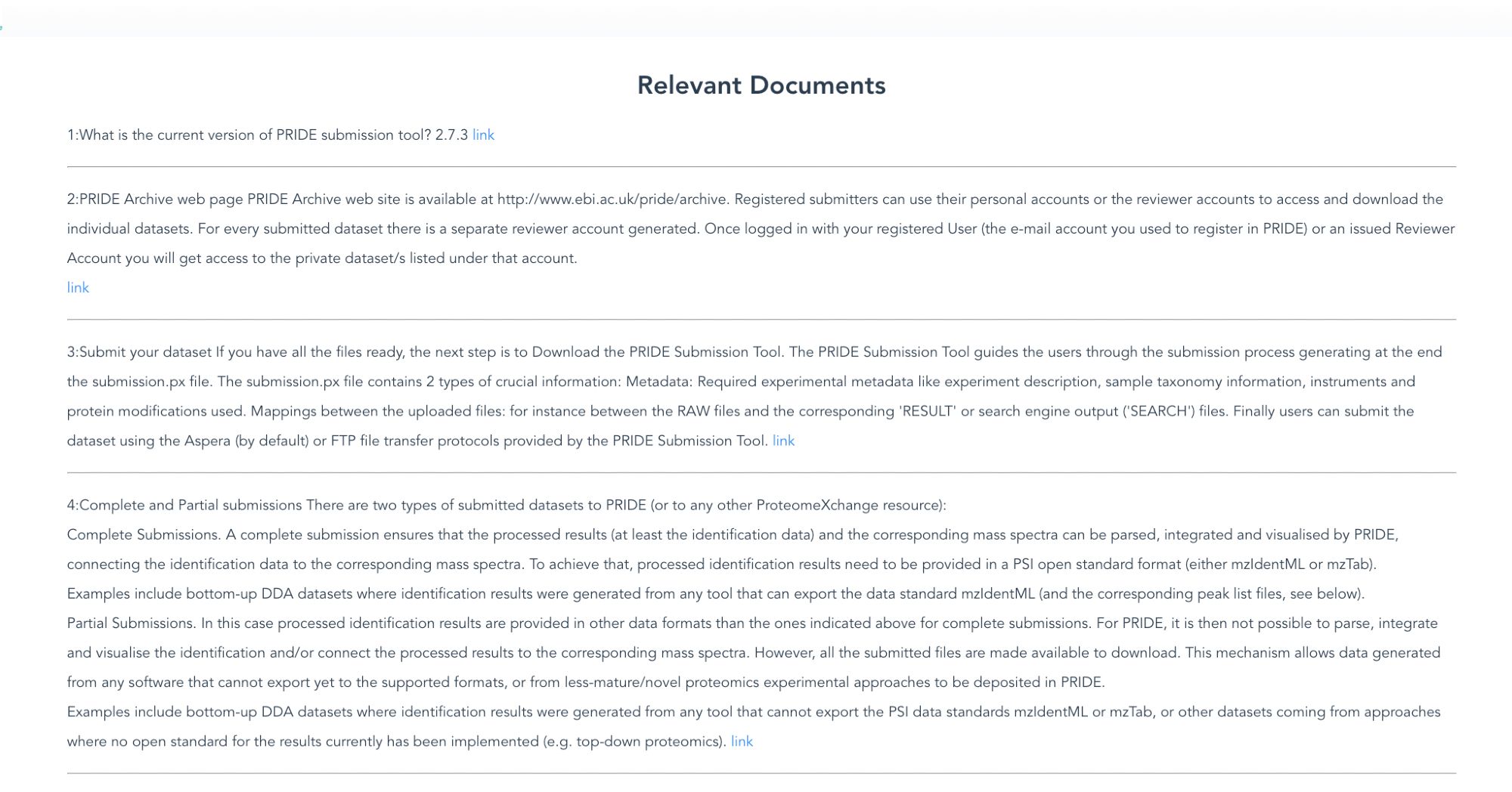

Since the release of the pride chatbot has been released we have seen more than 40 questions well responded to, no need for curator intervention. Now we don't have to reply to questions like: How can I download the entire PRIDE?

Training in bad data only produces bad models. I have seen a lot of deep-learning models in proteomics and almost no research/talks about the data that was used for training.

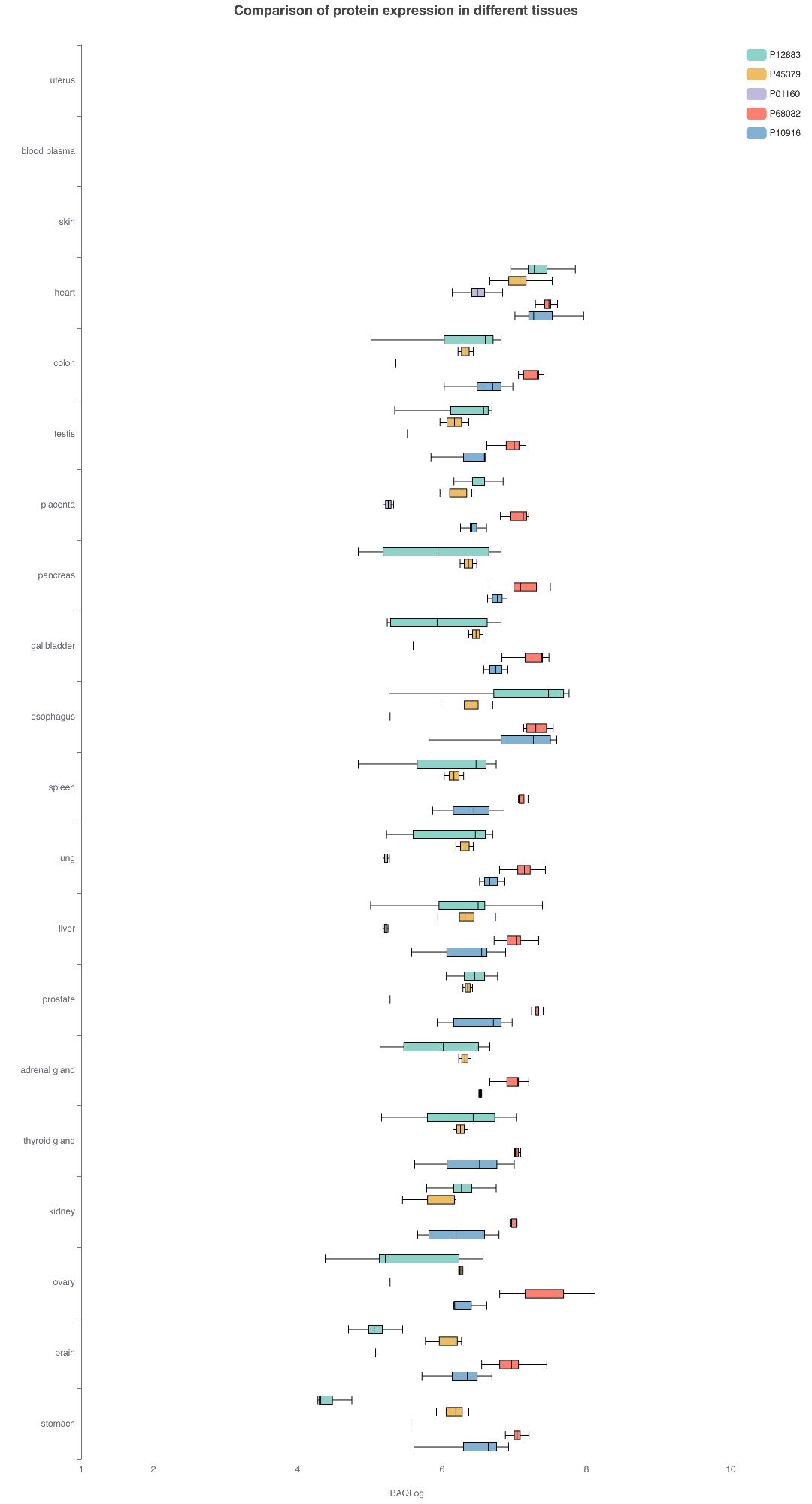

If you ask chatgpt to give you 5 proteins highly expressed in the human heart it responds: P12883, P45379, P01160, P68032, P10916. #quantmsquantms.org/ae/tissues?p...

Creazy idea: for those papers reanalysing large collections of datasets to put in the author list something like "Data Contributors", making easy for the authors that generate the original data to add the paper to their CV. Trying to give valuable Credit to the data submitters. What do you think?

We have developed a new python command-line tool to generate the PRIDE checksum files www.ebi.ac.uk/pride/markdo... this tool could help you to produce outside the PX submission tool your checksum.txt, nice for big submissions. Our target in the future is those submissions

Nice introduction in Chinese about quantms and what you can do with it in the quantms channel. www.youtube.com/watch?v=w3_b...#proteomics#massspectrometry#bigdata

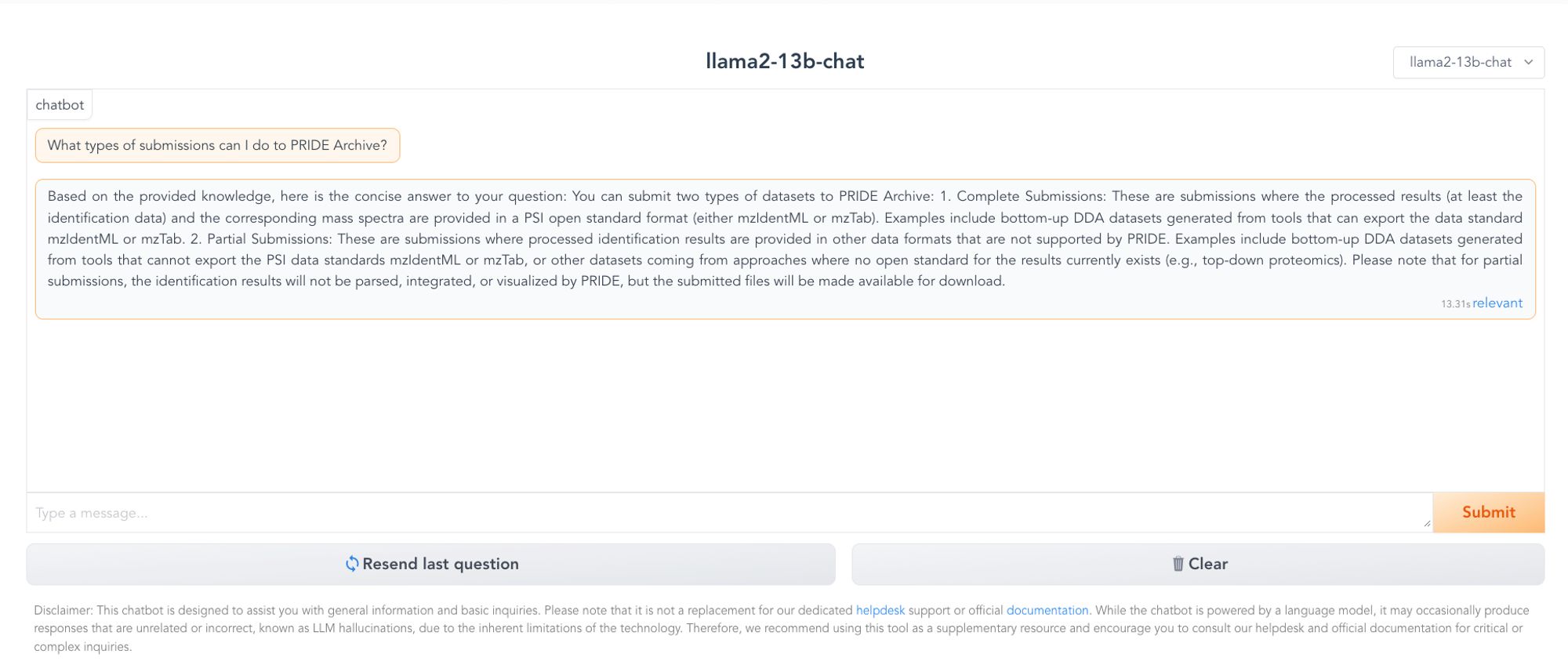

A chatbot to help our users to interact with PRIDE documentation. Example 👇. We are using #llama2#chatglm2-6b@benneely.com@alejandrobrenes.bsky.social#MS community. RT/

I'm honestly thinking about playing for chatgpt 4. I have rule, don't pay to work, but I think I will break it.

As datasets becomes bigger, more samples, more msruns we should move out of xml, csv txt files, and more into hdf5, parquet,sqlite etc.