Curious to know more? Read @philipcball.bsky.social press.uchicago.edu/ucp/books/bo...

“Bold and intriguing.”—Wall Street Journal • “Penetrating. . . . Provocative and profound.”—Publishers Weekly (starred review) • “Offers plenty of food for thought.”—Kirkus Reviews (starred review) • “...

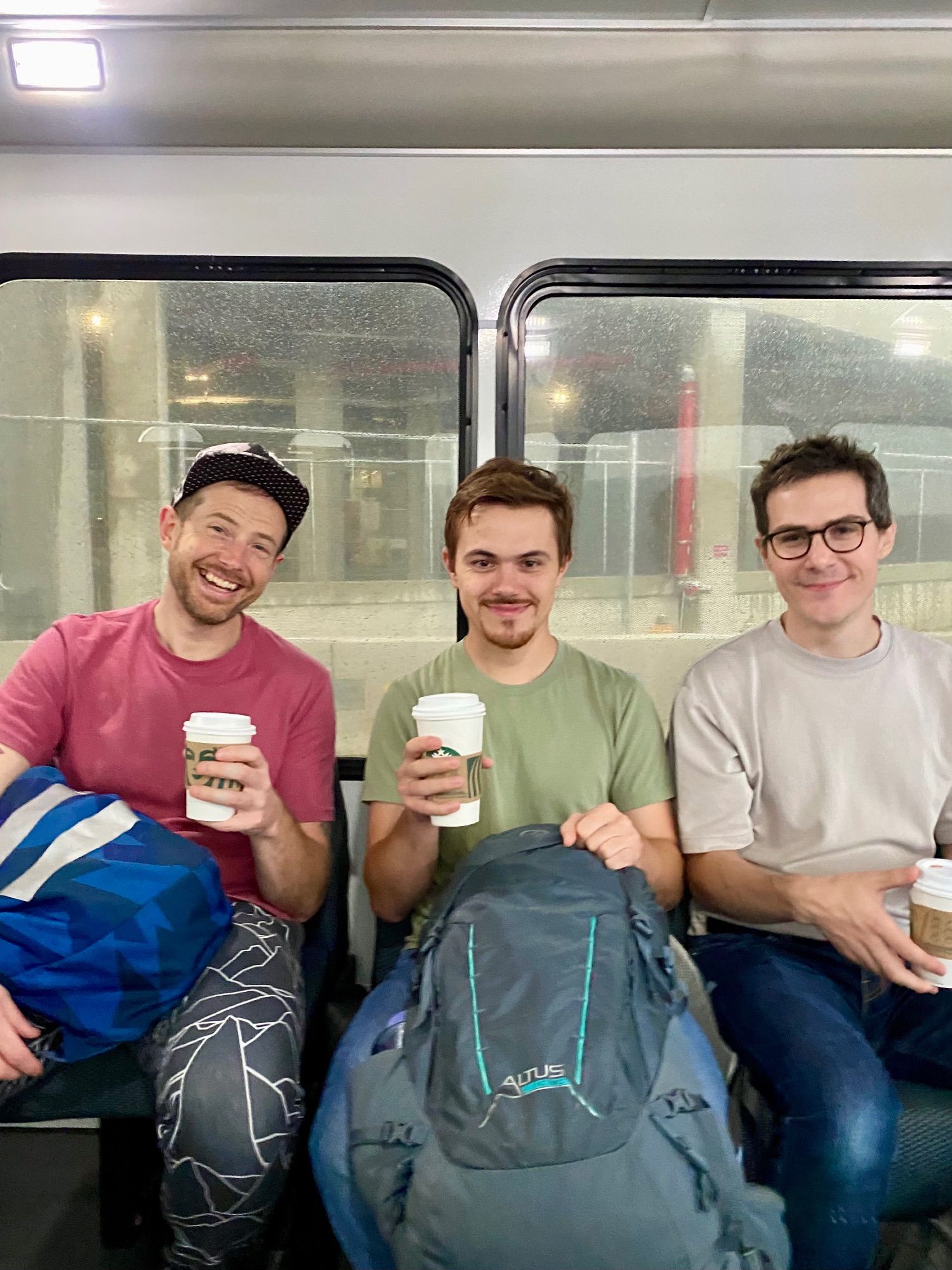

But my favorite moment has got to be enjoying Starbucks coffee with my lab, @tyrellturing.bsky.social, @oliviercodol.bsky.social, and Roman Pogodin, as we celebrated our arrival in the Big 🍎. A perfect blend of science, caffeine, and NYC energy!

Overall, NAISys felt like an extended summer school - small enough for meaningful interactions and focused on diverse NeuroAI topics. Kudos to the organizers, @tonyzador.bsky.social and @tyrellturing.bsky.social, for an amazing event!

A highlight of the conference was meeting people passionate about interdisciplinary research, especially during the poster session. It was great to connect with @computingnature.neuromatch.social.ap.brid.gy @neuromatch.bsky.social interactions.

This past week, I had a fantastic time attending NAISys at @cshlaboratory.bsky.social - a NeuroAI conference. It was amazing to learn about various topics like genomic coding, neuromorphic hardware, and in-context learning at the home of eight Nobel Prize winners in Physiology or Medicine! 1/N

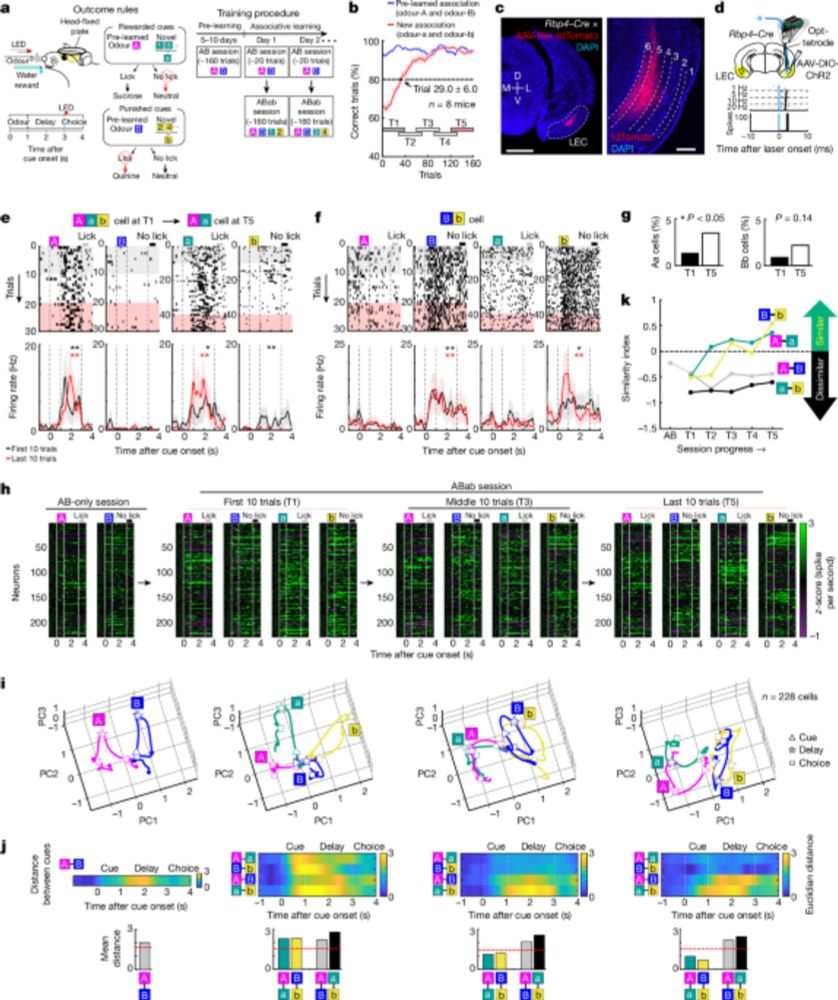

Evidence that entorhinal cortex and prefrontal cortex (in mice, sorry) work together to encode outcomes in associative learning: www.nature.com/articles/s41... Studying this kind of outcome monitoring is going to be critical to understanding the losses at play in the brain! 🧠📈

The bidirectional loop circuit between layers 5/6 of the lateral entorhinal cortex and the medial prefrontal cortex encodes item–outcome associative memory in mice.

First episode of our new podcast is out! Episode 1: "What is Intelligence?" It's hard to fully answer this question, but we had some great discussions about it with superstars Alison Gopnik and John Krakauer. Give it a listen! complexity.simplecast.com/episodes/nat...

An exciting problem to think about. It seems to suggest that the networks are gravitating towards a universal understanding of reality. Does this behavior indicate a natural emergence of Occam's razor, guiding the networks towards the simplest, most efficient representations?

Today's Q for discussion by the #NeuroAI arxiv.org/abs/2301.00234 Do we have clear evidence of rapid learning with no synaptic changes in the brain? If so, when/where?

With the increasing capabilities of large language models (LLMs), in-context learning (ICL) has emerged as a new paradigm for natural language processing (NLP), where LLMs make predictions based...