They could VERY EASILY justify giving Trump’s proposal to violently remove millions or execute The Purge the Hillary email treatment, but they don’t. And won’t.

Our Rube Goldberg system for electing a President isn't called the "Electoral College" in the Constitution or in federal statutes. Alexander Keyssar discusses the term's evolution in an appendix to his book, "Why Do We Still Have the Electoral College?" 🗃️

In much of the US, this is the last week to register to vote in the November election. Do not miss your opportunity, and if you are already registered, check that your registration is still valid. Get registered and encourage everyone you know to register. Here’s where to start: Vote.gov


I am 👀 at this language. While the court resisted outright acknowledging forced labor as involuntary servitude, here it is walking riiigghht up to the line of making a 13th Amendment argument for abortion rights, which I would be absolutely here for !!

SCOTUS claiming the power to legalize bribery is really underrated as an all-time lawless “ruling”

Jesus Christ, I just want morning coffee not a voyage to a mystic latte brotherhood on a distant mountaintop

Can we please stop calling everything in the world a "journey" now? I just got a new milk frother for coffee and the instruction book cover says in huge letters: Start Your Barista Creator Journey Here

Look I know I’m not a climate scientist, but this seems bad.

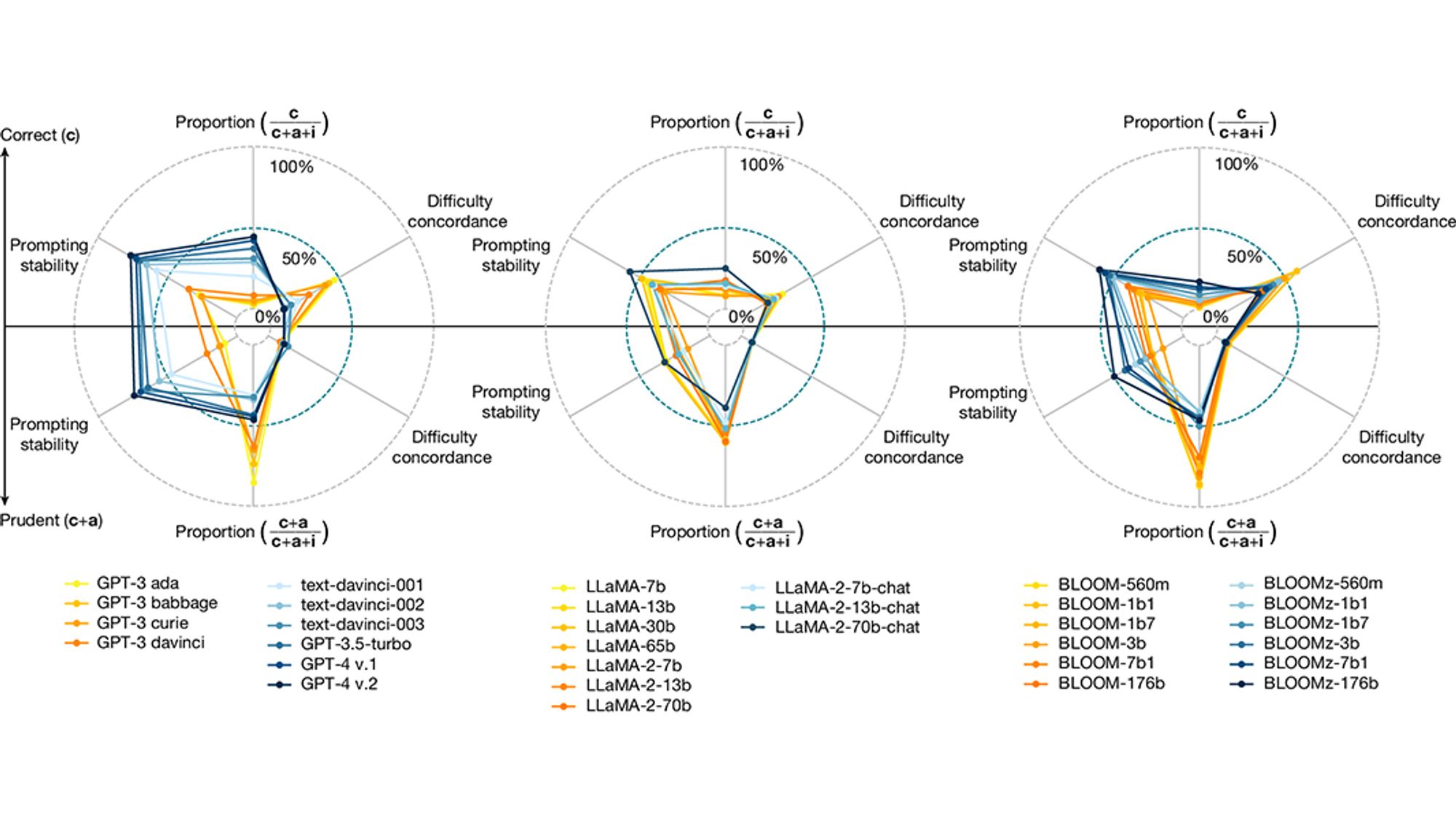

you can’t “shift” LLMs “towards reliability.” that’s like trying to “shift” a Fisher-Price pull-along toy into a supercar. they have superficial similarities but aren’t even in the same family of things. LLMs cannot be made to return “facts,” only things that sound similar to things they’ve ingested

More simply put: The larger and more trainable the language model, the more bullshit it produces. Because, and i guess I'll keep saying it until it sinks in, making up statistically plausible bullshit is literally what LLM/GPT systems *inherently* do. Nice to have another paper to cite for it, tho.